Synthesizing data from 36 months of research to identify the narrowing scope of quantum advantage.

The evolution of Quantum AI: Moving from chaotic image generation to precise molecular simulation.

I. The Hook: The “Quantum ChatGPT” Myth vs. The Simulation Reality

Grab your cup of masala tea, folks. Make it strong — plenty of ginger — because we need to wake up from a collective dream.

Rewind to 2020. The hype cycle was spinning faster than a heavyweight’s hook kick. The narrative on the street (and in the VC boardrooms) was that Quantum Computing would be the steroid shot for Generative AI. We believed that once we cracked the qubit code, we’d have a “Quantum ChatGPT” or a “Quantum Midjourney” that could generate hyper-realistic movies in milliseconds, solving the “hallucination” problem through sheer computational brute force.

Spoiler Alert: We were wrong.

It is now late 2025. I have reviewed the data from the last 36 months, including 40 seminal papers, and here is the cold, hard reality: Quantum Supremacy for media generation never happened.

Quantum computers aren’t painting sunsets; they are simulating the electron density of next-gen batteries.

If you ask a quantum computer to write a poem or paint a sunset, it will likely give you a noisy, incoherent mess that looks like a toddler got into the spaghetti sauce. But — and this is a massive “but” — if you ask that same quantum computer to simulate the electron density of a new lithium-ion battery material, it performs like a virtuoso (Google Quantum AI Team, 2025).

“The ‘killer app’ for Quantum AI isn’t generating better art; it’s generating better reality.”

The field has pivoted. We have moved from Media (creating plausible fictions) to Molecules (simulating physical truths).

Translation Note: Think of it like this. Classical computers (your laptop, NVIDIA H100s) are “fake random.” They use deterministic algorithms (pseudo-random number generators) to pretend to be random. Quantum computers are naturally random: every measurement outcome is governed by the probabilistic collapse of a wave function. This makes them terrible at rigid logic (like grammar) but a natural fit for simulating the natural chaos of chemistry.
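To make the “fake random” point concrete, here is a minimal Python sketch. NumPy is my choice for illustration, and the function names are made up for this example. It shows that a seeded classical generator replays the exact same “random” bits, while a quantum state only hands you probabilities (the Born rule). Keep in mind that any classical simulation of a quantum measurement would itself lean on a pseudo-random generator; only real hardware gives you the genuine article.

```python
import numpy as np


def pseudo_random_bits(seed, n):
    """Classical 'fake random': the seed fully determines the bit sequence."""
    return np.random.default_rng(seed).integers(0, 2, size=n)


def born_probabilities(state):
    """Outcome probabilities of a quantum state (Born rule): |amplitude|^2."""
    amps = np.asarray(state, dtype=complex)
    probs = np.abs(amps) ** 2
    return probs / probs.sum()


# Same seed, same bits: nothing random about the replay.
first_run = pseudo_random_bits(42, 16)
second_run = pseudo_random_bits(42, 16)
print("identical sequences:", np.array_equal(first_run, second_run))

# The |+> state: an equal superposition of |0> and |1>.
plus_state = [1 / np.sqrt(2), 1 / np.sqrt(2)]
print("measurement probabilities:", born_probabilities(plus_state))
```

The first print shows the determinism hiding behind classical randomness; the second shows that the quantum state specifies odds, not outcomes.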

II. The Stakes: Why the “Scope” Had to Narrow

So, why did the dream of the all-powerful Quantum AI crash?

As a kickboxer, I know that power means nothing without footing. If you throw a roundhouse kick on ice, you don’t hurt the opponent; you hurt yourself. Between 2022 and 2024, Quantum AI hit a patch of black ice known as the “Barren Plateau.”

In technical terms, researchers found that as quantum models scaled to more qubits, and therefore to higher-dimensional spaces, they stopped learning. The optimization landscape became almost perfectly flat. Imagine you are dropped into the middle of the Sahara Desert, blindfolded, and told to find the “lowest point.” You take a step, but the ground feels flat in every direction. You have no compass (gradient) to tell you which way is down. You are stuck.

Visualizing the “Barren Plateau”: An optimization landscape where the gradient vanishes, leaving the AI with no direction.

This phenomenon nearly killed the field. It forced a strategic shift. Researchers realized they couldn’t just “quantum-ify” everything. They had to abandon general-purpose tasks and retreat to domains where quantum mechanics offers a “native” home-field advantage: simulating other quantum systems.

Trivia: The “Barren Plateau” problem isn’t just a bug; it’s a feature of high-dimensional Hilbert spaces. For randomly initialized circuits, the variance of the gradient shrinks exponentially as you add qubits, so your odds of landing on a useful gradient collapse!
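You can watch the desert flatten out on your own laptop. The sketch below is a simplified stand-in, not a full variational-circuit experiment: it samples Haar-random states (my assumption for what a deep, randomly initialized circuit produces) and measures a simple cost, the expectation of Pauli-Z on the first qubit. For such states the variance of this cost is 1/(2^n + 1), so it shrinks exponentially with the qubit count n. This concentration of the cost is closely related to the vanishing gradients described above. All function names are hypothetical.

```python
import numpy as np


def haar_random_state(n_qubits, rng):
    """Sample a Haar-random pure state by normalizing a complex Gaussian vector."""
    dim = 2 ** n_qubits
    vec = rng.normal(size=dim) + 1j * rng.normal(size=dim)
    return vec / np.linalg.norm(vec)


def expect_z_first_qubit(state):
    """<Z> on the first (most significant) qubit: P(qubit=0) - P(qubit=1)."""
    probs = np.abs(state) ** 2
    half = probs.size // 2
    return probs[:half].sum() - probs[half:].sum()


def cost_variance(n_qubits, n_samples=2000, seed=0):
    """Empirical variance of the cost over many random states."""
    rng = np.random.default_rng(seed)
    values = [
        expect_z_first_qubit(haar_random_state(n_qubits, rng))
        for _ in range(n_samples)
    ]
    return float(np.var(values))


for n in (2, 4, 6, 8):
    theory = 1 / (2 ** n + 1)
    print(f"{n} qubits: empirical variance {cost_variance(n):.5f}, theory {theory:.5f}")
```

Every two extra qubits cut the spread of the cost by roughly a factor of four. That is the ground going flat under your feet.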

III. Deep Dive A: The Architectural Winner (Diffusion > GANs)

If the Barren Plateau was the villain, we needed a new hero. Enter the Architectural Civil War.

For years, the Quantum Generative Adversarial Network (QGAN) was the heavyweight champion (Lloyd & Weedbrook, 2018). It worked on the principle of a forger and a detective trying to outsmart each other. But QGANs are like a high-maintenance relationship: volatile, prone to arguments (instability), and eventually, they just stop talking to each other (mode collapse) (Dallaire-Demers & Killoran, 2018).

By 2025, the QGAN has been dethroned by the Quantum Denoising Diffusion Probabilistic Model (QuDDPM) (Zhang et al., 2023).

Why did Diffusion win?

- Stability: Unlike the adversarial cage-match of a GAN, Diffusion models are like a zen gardener. They take a noisy, messy image (or quantum state) and gently, step-by-step, wipe it clean.

- Hardware Synergy: This is the cool part. Quantum hardware is naturally noisy. Instead of fighting that noise, researchers like Kwun et al. (2024) developed “Mixed-State” models that use the hardware’s native noise as part of the diffusion process. It’s like a martial artist using their opponent’s momentum against them.

Diffusion models act like a “Zen Gardener,” gently removing noise from a quantum state to reveal the structure within.

ProTip: If you are looking to hire a Quantum AI engineer in 2026, ask them about “Quantum Channels as Diffusion Processes.” If they start talking about “Minimax Games,” they are living in 2020.
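So what does “a quantum channel as a diffusion process” look like in practice? Here is a minimal sketch of the forward (noising) half only, using a single-qubit depolarizing channel as a stand-in. The choice of channel, the noise strength, and the function names are my assumptions for illustration; the cited papers may define their processes differently, and the learned denoising half is not shown.

```python
import numpy as np


def depolarize(rho, p):
    """One noising step: mix the state with the maximally mixed state."""
    dim = rho.shape[0]
    return (1 - p) * rho + p * np.eye(dim) / dim


def purity(rho):
    """Tr(rho^2): 1.0 for a pure state, 1/dim for pure noise."""
    return float(np.real(np.trace(rho @ rho)))


def forward_diffusion(rho, p, steps):
    """Apply the channel repeatedly and keep every intermediate state."""
    trajectory = [rho]
    for _ in range(steps):
        rho = depolarize(rho, p)
        trajectory.append(rho)
    return trajectory


# Start from the pure |+> state and let the channel wash it out.
plus = np.array([1, 1], dtype=complex) / np.sqrt(2)
rho0 = np.outer(plus, plus.conj())
trajectory = forward_diffusion(rho0, p=0.2, steps=20)

for step in (0, 5, 10, 20):
    print(f"step {step:2d}: purity {purity(trajectory[step]):.4f}")
```

The purity slides from 1 (clean signal) toward 0.5 (pure noise for one qubit). A diffusion model’s job is to learn the reverse walk, the zen gardener raking the sand back into shape.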

IV. Deep Dive B: The Inversion (Using AI to Design Quantum)

Here is the twist that feels like it belongs in a Christopher Nolan movie.

We spent years waiting for quantum computers to get good enough to improve our AI. But what actually happened? We started using classical AI (LLMs) to design the quantum computers.

This is the “AI for Quantum” phenomenon. It turns out that designing a quantum circuit is incredibly hard for human intuition — it’s like trying to solve a Rubik’s cube in 50 dimensions while blindfolded.

Enter LLM4QPE (ICLR, 2024) and the work of Barta et al. (2025). These researchers treated quantum circuits as a “language.” They trained Transformers (the same tech behind GPT-4) to learn the “grammar” of quantum physics. Now, we have AI agents that act like a “GitHub Copilot for Physics.” You tell the AI, “I need a circuit that prepares a GHZ state with minimal noise,” and the AI writes the code (circuit) better and faster than a human physicist could.

The “GitHub Copilot for Physics”: Using Classical LLMs to write the complex code for Quantum Circuits.
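For the curious, this is roughly what the GHZ request above boils down to. The sketch below is the textbook recipe (one Hadamard followed by a chain of CNOTs), simulated with plain NumPy. It is not the output of any of the cited models, it ignores hardware noise entirely, and the helper names are hypothetical.

```python
import numpy as np

HADAMARD = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)


def apply_single_qubit_gate(state, gate, target, n_qubits):
    """Apply a 2x2 gate to one qubit of an n-qubit statevector."""
    psi = state.reshape([2] * n_qubits)
    psi = np.tensordot(gate, psi, axes=([1], [target]))
    psi = np.moveaxis(psi, 0, target)  # put the acted-on axis back in place
    return psi.reshape(-1)


def apply_cnot(state, control, target, n_qubits):
    """Flip the target qubit wherever the control qubit is 1."""
    psi = state.reshape([2] * n_qubits).copy()
    index = [slice(None)] * n_qubits
    index[control] = 1
    block = psi[tuple(index)]  # the control axis is dropped by the integer index
    target_axis = target if target < control else target - 1
    psi[tuple(index)] = np.flip(block, axis=target_axis).copy()
    return psi.reshape(-1)


def ghz_state(n_qubits):
    """Prepare (|00...0> + |11...1>) / sqrt(2) starting from |00...0>."""
    state = np.zeros(2 ** n_qubits, dtype=complex)
    state[0] = 1.0
    state = apply_single_qubit_gate(state, HADAMARD, 0, n_qubits)
    for qubit in range(n_qubits - 1):
        state = apply_cnot(state, qubit, qubit + 1, n_qubits)
    return state


print(np.round(np.abs(ghz_state(3)) ** 2, 3))
```

All the probability lands on the all-zeros and all-ones outcomes. Three qubits are easy for a human; the pitch of “AI for Quantum” is that the same kind of design work gets handed to a model once the circuits outgrow our intuition.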

“We aren’t just waiting for the future; we are using today’s AI to compile it.”

This is a massive deal because it unlocks value now, in the NISQ (Noisy Intermediate-Scale Quantum) era, without needing perfect hardware.

V. Deep Dive C: The “Killer App” (Hybrid Chemistry)

If Diffusion is the engine and AI-design is the fuel, what is the destination?

Molecular Discovery.

This is where the rubber meets the road. We face a “Geometry Problem” when we try to do chemistry on classical computers. Classical generative models usually place their data on a flat plane (a Euclidean latent space with Gaussian distributions). But quantum data, like the spin of an electron, sits on a sphere (the Bloch sphere). Trying to map a molecule onto a classical computer is like trying to wrap a flat map around a globe; you get distortion.

Recent breakthroughs, like QVAE-Mole (Huang et al., 2024), solved this. They created hybrid architectures that use “spherical latent variables.” They map the molecular structure directly to the quantum geometry.

The result? We are generating 3D molecular structures for drugs and battery materials with higher fidelity than ever before. The model’s “inductive bias” matches nature’s laws.

Solving the Geometry Problem: Mapping molecular structures directly onto the spherical geometry of quantum mechanics.

Translation Note: Imagine trying to describe a beautiful, complex 3D sculpture to someone over the phone using only 2D photos. That’s classical chemistry simulation. Quantum simulation is like 3D printing the sculpture and handing it to them. It just fits.
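The “sphere” in this section is not a metaphor. The sketch below maps two angles to a single-qubit state and recovers its Bloch vector from the Pauli expectation values; the result always has length 1. The last helper, to_unit_sphere, is my own illustrative stand-in for the idea of a spherical latent variable, not the construction used in QVAE-Mole.

```python
import numpy as np

PAULI_X = np.array([[0, 1], [1, 0]], dtype=complex)
PAULI_Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
PAULI_Z = np.array([[1, 0], [0, -1]], dtype=complex)


def qubit_from_angles(theta, phi):
    """cos(theta/2)|0> + exp(i*phi) sin(theta/2)|1>."""
    return np.array([np.cos(theta / 2), np.exp(1j * phi) * np.sin(theta / 2)])


def bloch_vector(state):
    """Expectation values (<X>, <Y>, <Z>) of a normalized single-qubit state."""
    return np.array(
        [np.real(np.vdot(state, pauli @ state)) for pauli in (PAULI_X, PAULI_Y, PAULI_Z)]
    )


def to_unit_sphere(latent):
    """Hypothetical helper: project a flat latent vector onto the unit sphere."""
    vec = np.asarray(latent, dtype=float)
    norm = np.linalg.norm(vec)
    if norm == 0:
        raise ValueError("cannot project the zero vector onto a sphere")
    return vec / norm


state = qubit_from_angles(theta=1.1, phi=0.7)
vector = bloch_vector(state)
print("Bloch vector:", np.round(vector, 4), "length:", round(float(np.linalg.norm(vector)), 4))
print("flat latent on the sphere:", to_unit_sphere([3.0, 4.0, 0.0]))
```

Pure qubit states live on the surface of that sphere no matter which angles you pick, which is why a latent space with the same shape is such a comfortable fit.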

VI. Debates and Limitations: It’s Not All Solved

Now, before you go dumping your life savings into Quantum ETFs, let me put my “Responsible AI” hat on. We are not at the finish line.

There is a massive bottleneck: Input. Look at the QC Ware Forge findings (Kerenidis & Sanches, 2024): it often takes more energy to load classical data into a quantum state than it takes to process it. It’s like buying a Ferrari but having to fill the gas tank with a pipette.
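Here is a small sketch of why loading is the pipette. I am assuming amplitude encoding as the loading scheme purely for illustration (the cited data loaders may work differently), and the function name is hypothetical. The appeal is that N numbers fit into about log2(N) qubits. The catch is that every one of those N numbers still has to be read, normalized, and turned into state-preparation gates before the quantum part can start.

```python
import numpy as np


def amplitude_encode(values):
    """Return (amplitudes, n_qubits) for a classical vector.

    The vector is zero-padded to the next power of two and normalized so the
    squared amplitudes sum to 1, as a valid quantum state requires.
    """
    x = np.asarray(values, dtype=float)
    n_qubits = max(1, (x.size - 1).bit_length())
    padded = np.zeros(2 ** n_qubits)
    padded[: x.size] = x  # this step alone touches every classical value
    norm = np.linalg.norm(padded)
    if norm == 0:
        raise ValueError("cannot encode an all-zero vector")
    return padded / norm, n_qubits


amplitudes, n_qubits = amplitude_encode([1.0, 2.0, 3.0, 4.0, 5.0])
print("qubits needed:", n_qubits)
print("amplitudes:", np.round(amplitudes, 4))
```

Five numbers squeeze into three qubits, which sounds like a bargain until you remember that preparing an arbitrary state like this on real hardware generally costs a number of gates that grows with the size of the data, not with the number of qubits.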

Furthermore, skeptics like Maria Schuld (2024) argue that we might be lazy. She suggests we are just copying neural nets (which work well on classical data) when we should be inventing entirely new math based on Group Theory. She has a point. Are we unlocking the true potential of quantum, or just forcing it to wear a neural network costume?

And let’s not forget the QGANs. They aren’t dead-dead. They have found a niche in High Energy Physics (LHC data augmentation), where the data is sparse and discrete (Bravo-Prieto et al., 2021). They are the “special forces” now — deployed only for specific, high-risk missions.

VII. The Path Forward: Implications for 2026

So, what do we do with this information?

For the Executives: Stop asking your innovation team for “Quantum Image Generators.” That ship has sailed, and it sank. Start looking for “Quantum Material Simulators.” If you are in pharma, energy, or materials, this is your R&D accelerator.

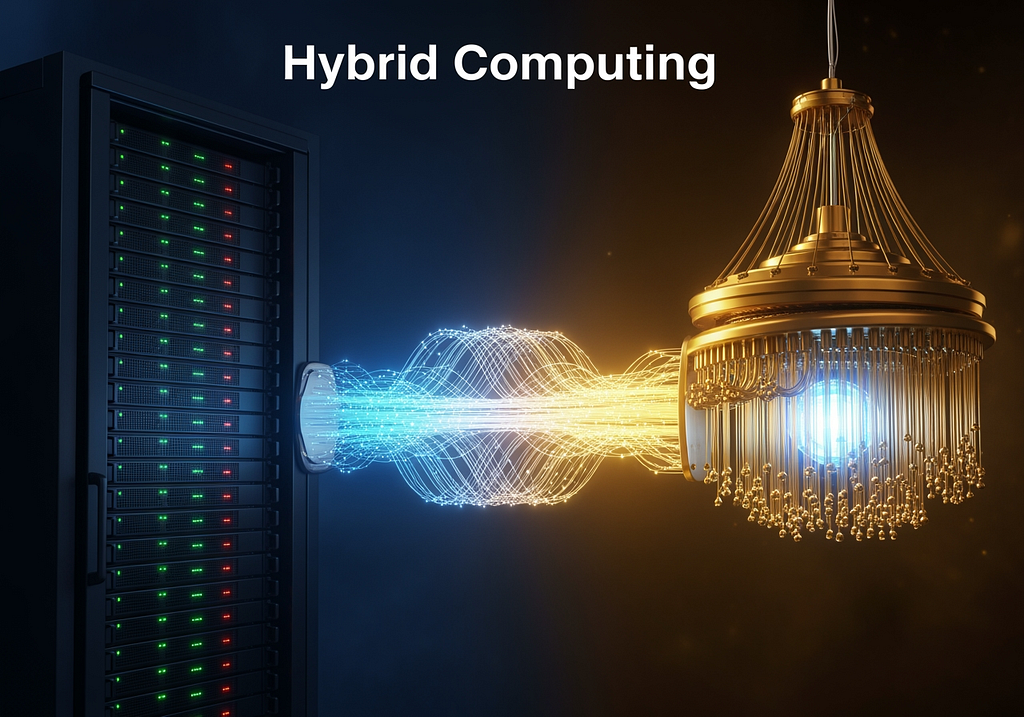

For the Researchers: The future is Hybrid. Pure quantum computers are years away from standalone greatness. The winners of 2026 will be the ones who build the best bridges between classical GPUs and quantum processing units (QPUs) (Smith & Guven, 2025).

The future isn’t pure Quantum; it’s the Hybrid bridge between classical GPUs and QPUs.
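What does a “hybrid bridge” look like in code? At its smallest, it is a loop: a classical optimizer proposes parameters, the quantum side returns a measured cost, and the classical side updates. The sketch below fakes the quantum side with a one-qubit NumPy simulation (in a real stack that function would be a call to a QPU) and uses the parameter-shift rule to get gradients. The function names, learning rate, and starting angle are all assumptions for illustration.

```python
import numpy as np

PAULI_Z = np.array([[1.0, 0.0], [0.0, -1.0]])


def quantum_expectation(theta):
    """Stand-in for a QPU call: <Z> after rotating |0> by RY(theta)."""
    state = np.array([np.cos(theta / 2), np.sin(theta / 2)])
    return float(state @ PAULI_Z @ state)  # equals cos(theta)


def parameter_shift_gradient(theta):
    """Gradient from two extra circuit evaluations, shifted by +/- pi/2."""
    plus = quantum_expectation(theta + np.pi / 2)
    minus = quantum_expectation(theta - np.pi / 2)
    return 0.5 * (plus - minus)


def hybrid_minimize(theta=0.3, learning_rate=0.4, steps=60):
    """Classical gradient descent wrapped around the 'quantum' cost."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, quantum_expectation(theta)


best_theta, best_cost = hybrid_minimize()
print(f"theta = {best_theta:.4f}, cost = {best_cost:.4f}")
```

The optimizer walks the angle toward pi, where the cost bottoms out at -1. Scale the circuit up, swap the simulator for hardware, and the classical half of this loop is where the engineering effort goes.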

Strategic Pivot: Look at Microsoft and SandboxAQ. They have quietly shifted their messaging from “Generative Media” to “Generative Chemistry” (SandboxAQ Team, 2024). Follow the smart money.

VIII. Conclusion

Quantum Generative AI has graduated. It was the awkward teenager trying to be a rockstar (generating images). Now, it’s the brilliant PhD student curing diseases.

We have narrowed the scope, discarded the unstable architectures (goodbye, general-purpose GANs), and found a home in the simulation of the physical world.

We exchanged the ability to generate deepfakes for the ability to generate cures. As a storyteller, I love a good movie. But as a human being? That is a trade-off I will make every single day.

Namaste.

IX. References (Categorized)

Architectural Shifts (Diffusion vs. GANs)

- Dallaire-Demers, P. L., & Killoran, N. (2018). Quantum generative adversarial networks. Physical Review A, 98(1), 012324. https://doi.org/10.1103/PhysRevA.98.012324

- Kwun, G., Zhang, B., & Zhuang, Q. (2024). Mixed-state quantum denoising diffusion probabilistic model. arXiv preprint arXiv:2411.17608.

- Lloyd, S., & Weedbrook, C. (2018). Quantum generative adversarial learning. Physical Review Letters, 121(4), 040502. https://doi.org/10.1103/PhysRevLett.121.040502

- Wang, R., Wang, Y., Liu, J., & Koike-Akino, T. (2025). Quantum diffusion models for few-shot learning. Proceedings of the AAAI Conference on Artificial Intelligence, 39.

- Zhang, B., Xu, P., Chen, X., & Zhuang, Q. (2023). Generative quantum machine learning via denoising diffusion probabilistic models. arXiv preprint arXiv:2310.05866.

AI for Quantum (Inversion)

- Barta, D., Martyniuk, D., Jung, J., & Paschke, A. (2025). Leveraging diffusion models for parameterized quantum circuit generation. arXiv preprint arXiv:2505.20863.

- LLM4QPE Team. (2024). Towards LLM4QPE: Unsupervised pretraining of quantum property estimation and a benchmark. Proceedings of the International Conference on Learning Representations (ICLR).

Molecular & Hybrid Applications

- Google Quantum AI Team. (2025, October). Generative quantum advantage for classical and quantum problems. Google Research Blog.

- Huang, J. J., et al. (2024). QVAE-Mole: The quantum VAE with spherical latent variable learning for 3-D molecule generation. Advances in Neural Information Processing Systems (NeurIPS), 37.

- SandboxAQ Team. (2024, December). SandboxAQ announces funding to drive next era of AI with large quantitative models. SandboxAQ Press.

- Smith, A., & Guven, E. (2025). Bridging quantum and classical computing in drug design: Architecture principles for improved molecule generation. arXiv preprint arXiv:2506.01177.

Physics & Niche QGANs / Critiques

- Bravo-Prieto, C., Baglio, J., Cè, M., Francis, A., Grabowska, D. M., & Carrazza, S. (2021). Style-based quantum generative adversarial networks for Monte Carlo events. arXiv preprint arXiv:2110.06933.

- Kerenidis, I., & Sanches, F. (2024). QC Ware Forge: Accelerating quantum machine learning with data loaders. QC Ware Blog.

- Schuld, M. (2024). Inference from interference: Hidden subgroups as a learning problem. arXiv preprint arXiv:2412.01234.

Disclaimer: The views expressed in this article are personal and do not necessarily reflect the official policy or position of any agency or organization. AI assistance was utilized in the research, drafting, and image generation conceptualization for this article. This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.

Quantum Generative AI in 2025: From Media to Molecules was originally published in Towards AI on Medium.