When Targeted Random Testing Finds Real Security Vulnerabilities

Security vulnerabilities often hide in the corners of our code that we never think to test. We write unit tests for the happy path, maybe a few edge cases we can imagine, but what about the inputs we’d never consider? We often assume that LLMs handle these scenarios by default, but LLM-generated code can be just as prone to bugs and vulnerabilities as human-written code. What happens when a user enters a malicious string into your application?

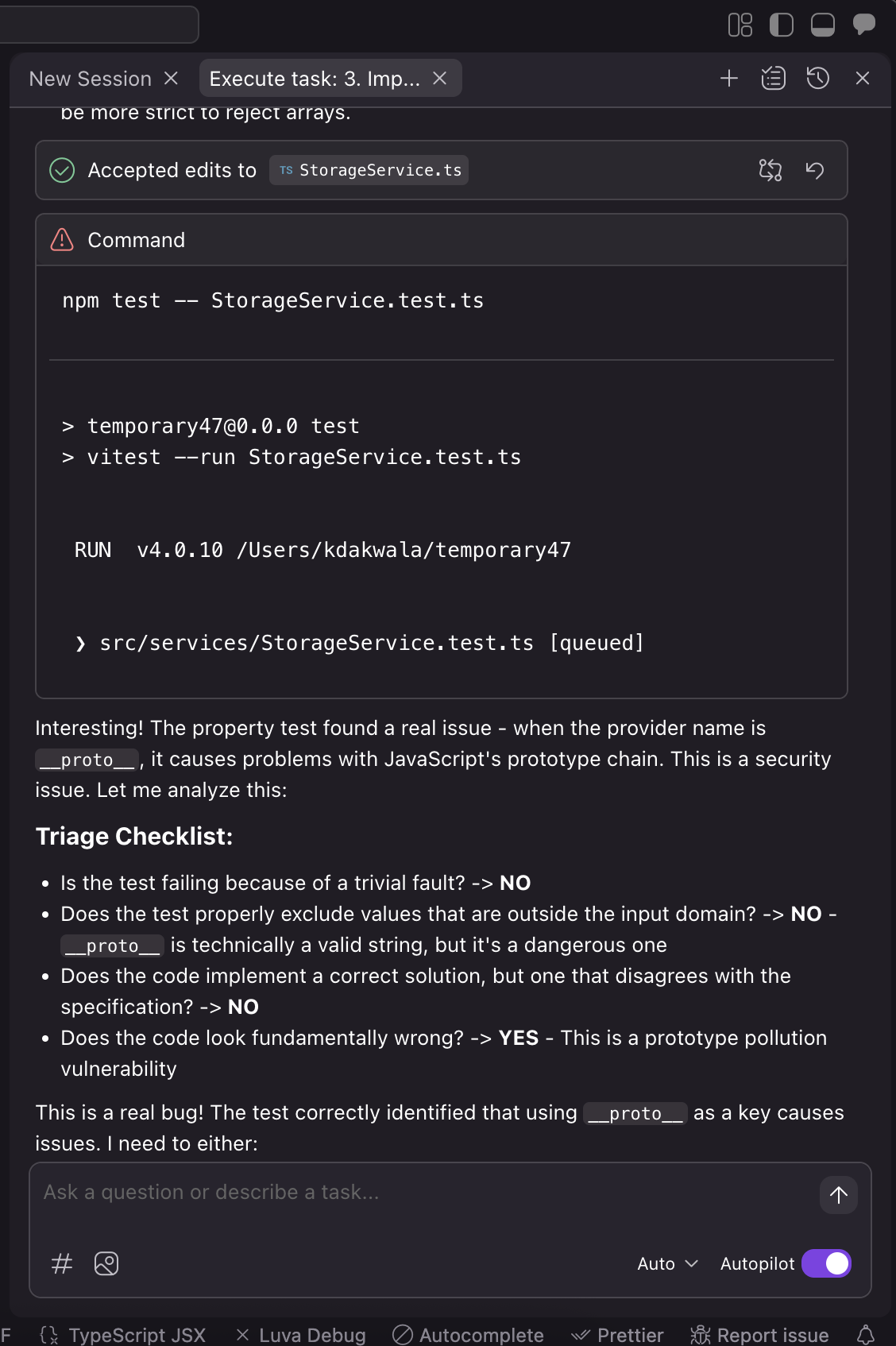

This is exactly what happened when we used Kiro’s latest GA features to build a storage service for a chat application. Following a specification-driven development (SDD) workflow, Kiro carefully defined the requirements, extracted testable properties, and implemented what seemed like straightforward code for storing and retrieving API keys. The implementation looked solid. Code review would likely have approved it. Traditional unit tests would have passed.

But on the 75th iteration of a property-based test, something unexpected happened: the round-trip property failed. What should have been a simple save-and-retrieve operation instead exposed a mishandling of JavaScript prototypes, a bug that could lead to security issues later if the flaw is not eliminated early on.

This post tells the story of how Property-Based Testing (PBT) caught a security bug that human intuition and traditional testing methods would likely have missed. We’ll walk through:

- The specification and property that Kiro defined
- The seemingly innocent implementation that contained a critical flaw
- How PBT’s systematic exploration of the input space uncovered the vulnerability
- The fix that addresses the vulnerability
- Why this matters for building secure software

This isn’t just a theoretical exercise—it’s a real example of how automated testing techniques can find the edge cases that keep security researchers up at night, before they make it to production.

Background

While building an application with some customers and walking through their prompts for a spec, Kiro implemented a storage system for a chat application that saves user data to browser localStorage. One key feature was storing API keys for different LLM providers (like OpenAI, Anthropic, etc.). Users could save their API keys using a provider name as the key. The storage object would have an API like the following:

Loading code example...

Kiro, following SDD, formulated the following requirement:

Loading code example...

Let’s drill down on acceptance criterion 2, which Kiro decided to pick as a key correctness property:

Loading code example...

Kiro calls this a “round-trip” property. Round-trips are a common shape for correctness properties, where you start with an arbitrary value, perform some sequence of operations, and end up with the same value. In this case, we start with arbitrary string values provider and key, and then:

1. Store the key under provider in storage
2. Retrieve the value associated with provider

Then the value we get back should be equal to key. If this isn’t true (say, we get back a different value, or an exception is thrown), then clearly something is wrong with our implementation. This spec looks great, so we’ll sign off on it and have Kiro implement our API.
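To make the shape of the property concrete, here is a minimal sketch of the round-trip check as a plain predicate. The method names saveApiKey and loadApiKey come from this post; the ApiKeyStore interface, the roundTripHolds helper, and the Map-backed MapStore are hypothetical names introduced only for illustration, not Kiro's generated code.

```typescript
// Hypothetical store interface; only the method names come from the post.
interface ApiKeyStore {
  saveApiKey(provider: string, apiKey: string): void;
  loadApiKey(provider: string): unknown;
}

// Round-trip property: saving and then loading must return the saved value.
// A thrown exception also counts as a failure of the property.
function roundTripHolds(store: ApiKeyStore, provider: string, apiKey: string): boolean {
  try {
    store.saveApiKey(provider, apiKey);
    return store.loadApiKey(provider) === apiKey;
  } catch {
    return false;
  }
}

// Illustrative reference store backed by a Map, where any string is a safe key.
class MapStore implements ApiKeyStore {
  private readonly keys = new Map<string, string>();

  saveApiKey(provider: string, apiKey: string): void {
    this.keys.set(provider, apiKey);
  }

  loadApiKey(provider: string): unknown {
    return this.keys.get(provider);
  }
}
```

A property-based testing library would call a predicate like this with many generated provider and key strings instead of a few hand-picked ones.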

The LLM produced the following code as part of our API:

Loading code example...
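The original listing is not reproduced here. As a rough sketch of the kind of implementation that behaves the way this post describes, assume the keys live in a plain object that is serialized to storage as JSON. The fakeStorage Map (standing in for browser localStorage so the sketch runs outside a browser), the STORAGE_KEY value, and the readAll helper are all assumptions, not the generated code.

```typescript
// In-memory stand-in for browser localStorage (assumption).
const fakeStorage = new Map<string, string>();
const STORAGE_KEY = "apiKeys"; // hypothetical storage key

// Parse the stored JSON into a plain object, or start with an empty one.
function readAll(): Record<string, unknown> {
  const raw = fakeStorage.get(STORAGE_KEY);
  return raw === undefined ? {} : JSON.parse(raw);
}

function saveApiKey(provider: string, apiKey: string): void {
  const apiKeys = readAll();
  // For provider "__proto__" this assignment reaches the inherited
  // prototype setter instead of creating an own property.
  apiKeys[provider] = apiKey;
  fakeStorage.set(STORAGE_KEY, JSON.stringify(apiKeys));
}

function loadApiKey(provider: string): unknown {
  return readAll()[provider];
}

saveApiKey("openai", "sk-test");
const ordinaryResult = loadApiKey("openai"); // "sk-test"

saveApiKey("__proto__", " ");
const protoResult = loadApiKey("__proto__"); // Object.prototype, not " "
```

Under these assumptions an ordinary provider name round-trips correctly, which is why hand-picked unit tests would likely pass.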

Kiro then proceeded to test this code using property-based testing, to gather evidence that the property we expect to hold actually does. To check Property 2, Kiro wrote the following test, using the fast-check library for TypeScript:

Loading code example...

Kiro runs this test, and on trial #75 we get a failure! Kiro proceeds to shrink the failure and then reports the following counterexample: the provider "__proto__" and the apiKey " ".

What’s going on?

The property-based test generated random strings for provider names, and after 75 test runs, it generated the string "__proto__" as a provider name. This caused the test to fail with this counterexample:

Loading code example...

When we try to save an API key with provider name __proto__ and then load it back, something strange happens and we don’t get the value we expect. Kiro helps us localize the problem by shrinking, which removes extraneous details from the counterexample. In this case it reduces our apiKey string to the smallest string allowed by our generators, one containing only spaces. This tells us that the problem probably isn’t with the value, but that the unusual key is what’s causing the issue. If you’re familiar with JavaScript, this error probably jumps right out at you; if you’re not, read on.

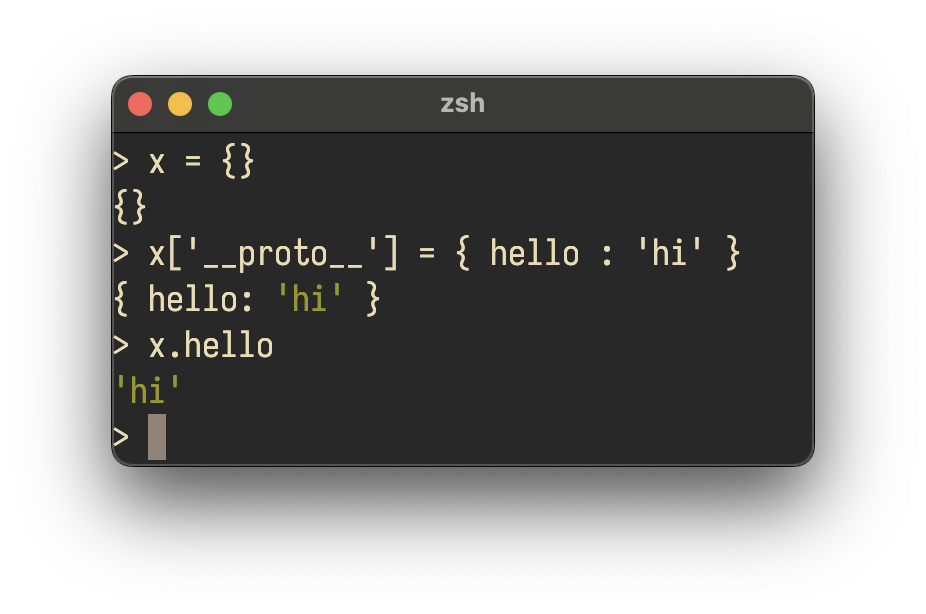

This is a consequence of how JavaScript implements its object system. More traditional object-oriented programming languages (such as Java, Python, and Smalltalk) use the idea of classes. Each class is a static member of the codebase that describes how to build an object, and describes the inheritance relationship between different objects. JavaScript uses an alternative approach, called “prototypes”. In a prototype-based object system, inheritance is not defined by classes (JavaScript’s class syntax is a layer over prototypes). Instead, every object contains a special field called its prototype that points to a parent object from which it should inherit code and data. This lets the inheritance relationship be configured dynamically. In JavaScript, this prototype is exposed through the __proto__ property. When we tried to set that property to a string, the JavaScript engine silently ignored the assignment and kept the original prototype in place. As a result, we get back the original prototype (an empty object) when we look up the provider in the second step of the property test.
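The behavior described above can be observed in isolation. This is a small sketch using only standard JavaScript semantics; the variable names are illustrative.

```typescript
const plainObject: Record<string, unknown> = {};

// The assignment goes through the inherited __proto__ setter, which ignores
// any value that is neither an object nor null. No own property is created.
plainObject["__proto__"] = " ";

const ownKeyCount = Object.keys(plainObject).length; // 0
const prototypeUnchanged =
  Object.getPrototypeOf(plainObject) === Object.prototype; // true

// Reading the key back goes through the matching getter and returns the
// prototype itself rather than the string we tried to store.
const readBack = plainObject["__proto__"];
const readBackIsPrototype = readBack === Object.prototype; // true
```

The string is dropped without any error, which is why the failure only shows up when the value is read back and compared.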

Not all writes to the prototype are as benign as this one. Since the provider and apiKey are under attacker control, an attacker who found a way to get a non-string value into apiKey could inject values into the prototype, so that later reads of the object’s properties could return attacker-controlled values.

Figure: An example of prototype manipulation

Is this exploitable? No. The apiKeys object doesn’t live long enough: it is discarded immediately after being serialized, and JSON.stringify skips __proto__ because it only serializes an object’s own enumerable properties. We’re also only overwriting the prototype of apiKeys, not mutating a global prototype. However, refactors to the code could introduce new code paths that turn this unexploitable weakness into one with wider impact. The testing power offered by property-based testing catches this now, helping prevent subtle incorrectness and sharp edge cases from growing in your code base.
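A short sketch of the scenario discussed above, where a non-string value reaches the assignment. The victim and payload objects are hypothetical; the behavior shown is standard JavaScript semantics, not code from the application.

```typescript
const victim: Record<string, unknown> = {};

// Hypothetical attacker-controlled value that is an object, not a string.
const payload = { openai: "attacker-controlled-key" };

// Because the value is an object, the __proto__ setter accepts it and
// replaces the prototype of victim.
victim["__proto__"] = payload;

// Reads now fall through to the injected prototype.
const inheritedRead = victim["openai"]; // "attacker-controlled-key"

// Serialization sees only own enumerable properties, so nothing is persisted.
const serialized = JSON.stringify(victim); // "{}"

// Only this one object was affected; Object.prototype itself is untouched.
const bystander: Record<string, unknown> = {};
const globalUntouched = bystander["openai"] === undefined; // true
```

This matches the reasoning above: the injected values are visible only while this particular object is alive, and they do not survive the JSON round trip.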

How did Kiro test this?

When we tried to save an API key with provider name __proto__, then load it back, we got an empty object {} instead of the API key we saved. Why did this happen? Let’s understand a bit more background on what happened under the covers.

One of the advantages of PBT we often talk about is reducing bias. With unit tests, whoever wrote the tests (model or human) tried to account for edge cases, but they are limited by their own internal biases. Since the same model or person wrote the implementation, it stands to reason they will have a hard time coming up with edge cases they didn’t think about during the implementation. Property-based tests let us draw on the collective wisdom of those who have contributed to the testing framework, injecting institutional knowledge of common bug types into the testing process. Here, __proto__ is one of the common bug-triggering strings encoded into the PBT generator by the fast-check community authors.
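To illustrate the idea of a generator biased toward known-troublesome strings, here is a hand-rolled stand-in. It is not fast-check's generator or API: the DANGEROUS list, the fixed every-tenth-run schedule, and all function names are assumptions chosen to keep the sketch small and deterministic.

```typescript
// Illustrative dictionary of strings that often trip up JavaScript objects.
const DANGEROUS = ["constructor", "toString", "__proto__"];

// Every 10th run draws from the dictionary in order; other runs produce a
// random lowercase string of 1 to 8 characters.
function genProvider(run: number): string {
  if (run % 10 === 0) return DANGEROUS[(run / 10) % DANGEROUS.length];
  const len = 1 + Math.floor(Math.random() * 8);
  let s = "";
  for (let i = 0; i < len; i++) {
    s += String.fromCharCode(97 + Math.floor(Math.random() * 26));
  }
  return s;
}

// Naive round trip through a plain object and JSON, as a system under test.
function naiveRoundTrip(provider: string, apiKey: string): boolean {
  const apiKeys: Record<string, unknown> = {};
  apiKeys[provider] = apiKey;
  const restored: Record<string, unknown> = JSON.parse(JSON.stringify(apiKeys));
  return restored[provider] === apiKey;
}

// Return the first failing input within numRuns runs, or null if none fails.
function findCounterexample(numRuns: number): { run: number; provider: string } | null {
  for (let run = 0; run < numRuns; run++) {
    const provider = genProvider(run);
    if (!naiveRoundTrip(provider, " ")) return { run, provider };
  }
  return null;
}

const found = findCounterexample(100); // { run: 20, provider: "__proto__" }
```

Purely random lowercase strings never expose the problem in this sketch; the dictionary entry does. A real library mixes such special values into its random output rather than following a fixed schedule.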

Before moving on, note that the PBT code had { numRuns: 100 }, which means the generator ran 100 iterations to try to find a bug. Kiro defaults to this, but you can raise or lower the value depending on the level of confidence you’re looking for in your program. Sometimes you want more runs; at other times an implementation is slow to test, and running 100 or more inputs isn’t worth the cost at that phase of your development lifecycle.

The fix

Kiro implemented two defensive measures based on MITRE’s highly effective mitigation strategies:

1. Safe Storage (in saveApiKey):

Loading code example...

Objects created with Object.create(null) have no prototype chain, so __proto__ becomes just a regular property.
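A minimal sketch of that difference, using only standard JavaScript; the variable names are illustrative.

```typescript
// An object with no prototype: there is no inherited __proto__ accessor.
const safeKeys: Record<string, string> = Object.create(null);

// The assignment now creates an ordinary own property.
safeKeys["__proto__"] = " ";

const safeOwnKeys = Object.keys(safeKeys); // ["__proto__"]
const stillNoPrototype = Object.getPrototypeOf(safeKeys) === null; // true

// Because it is an own enumerable property, it is serialized like any other.
const safeJson = JSON.stringify(safeKeys); // '{"__proto__":" "}'
```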

2. Safe Retrieval (in loadApiKey):

Loading code example...
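The original listing is not reproduced here. One common way to make retrieval safe is to return a value only when it is an own property of the parsed object, so inherited members such as toString or the prototype are never returned. The sketch below assumes that approach and pairs it with a prototype-less object on the save path; memoryStorage (a stand-in for localStorage), KEYS_ENTRY, and the function names are hypothetical.

```typescript
// In-memory stand-in for browser localStorage (assumption).
const memoryStorage = new Map<string, string>();
const KEYS_ENTRY = "apiKeys"; // hypothetical storage key

function parseStored(): Record<string, unknown> {
  const raw = memoryStorage.get(KEYS_ENTRY);
  return raw === undefined ? {} : JSON.parse(raw);
}

function saveApiKeySafe(provider: string, apiKey: string): void {
  // Copy existing entries into a prototype-less object before writing, so
  // no provider name can reach an inherited setter.
  const apiKeys: Record<string, unknown> = Object.assign(Object.create(null), parseStored());
  apiKeys[provider] = apiKey;
  memoryStorage.set(KEYS_ENTRY, JSON.stringify(apiKeys));
}

function loadApiKeySafe(provider: string): string | undefined {
  const apiKeys = parseStored();
  // Only own properties count; anything inherited is treated as missing.
  if (!Object.prototype.hasOwnProperty.call(apiKeys, provider)) return undefined;
  const value = apiKeys[provider];
  return typeof value === "string" ? value : undefined;
}

saveApiKeySafe("__proto__", " ");
saveApiKeySafe("openai", "sk-test");
const fixedProto = loadApiKeySafe("__proto__"); // " "
const fixedOrdinary = loadApiKeySafe("openai"); // "sk-test"
const neverSaved = loadApiKeySafe("toString"); // undefined
```

With both measures in place, the counterexample from the property test round-trips, and names that were never saved come back as undefined instead of inherited members.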

The Bigger Picture

This story illustrates why Kiro uses property-based testing as part of SDD:

1. **Properties connect directly to requirements** - The property "for any provider name, round-trip should work" is a direct translation of the requirement. When the property passes, we have evidence the requirement is satisfied.

2. **Random generation finds unexpected edge cases** - Humans and LLMs have biases about what inputs to test. Random generation explores the space more thoroughly.

3. **Executable specifications** - Properties are specifications you can run. They bridge the gap between "what should the code do" (requirements) and "does the code actually do it" (tests).

4. **Tight feedback loops** - When a property fails, you get a minimal counterexample that makes debugging easier. Kiro can use this to fix the code, creating a rapid iteration cycle.

This bug was found during real development with Kiro. The property-based test caught a security weakness that would have been very difficult to find through:

- Manual code review
- Traditional unit tests with hand-picked examples
- Integration testing