If you’ve shipped an LLM project into production before, here’s a scenario that might sound familiar.

One of the major AI labs ships a new flagship model. The release notes promise improvements across the board. The benchmark results look better. You run a few test queries with the new model in your project and the responses seem good. So you make the switch and push to prod.

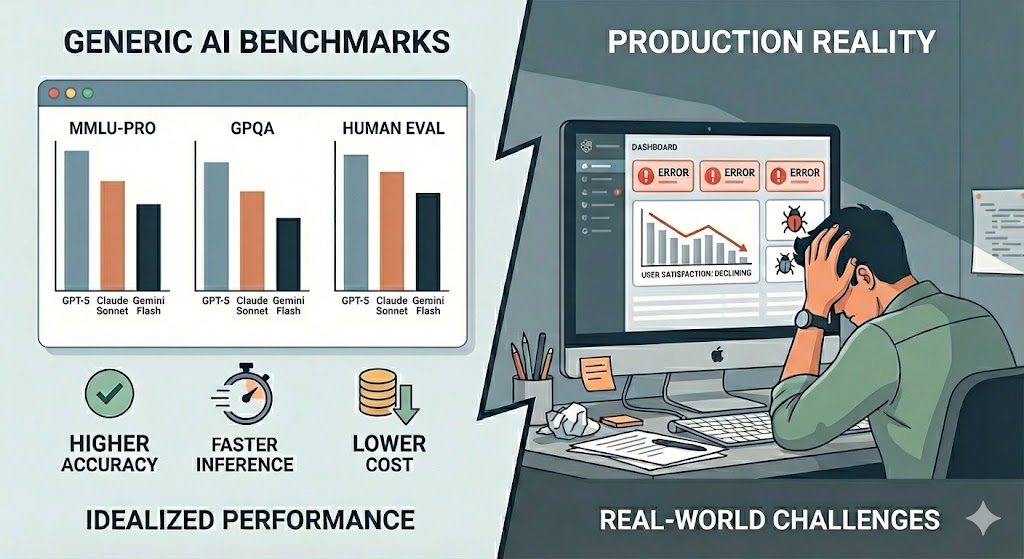

Then a week later, you’re debugging why your production application is behaving worse than before. Users are complaining. Bug reports are piling up. The model scores higher on benchmarks, but it’s performing worse on what you actually need it to do.

This happens because generic benchmarks measure breadth across many tasks. Your application needs depth on one specific task. The only way to know i…

If you’ve shipped an LLM project into production before, here’s a scenario that might sound familiar.

One of the major AI labs ships a new flagship model. The release notes promise improvements across the board. The benchmark results look better. You run a few test queries with the new model in your project and the responses seem good. So you make the switch and push to prod.

Then a week later, you’re debugging why your production application is behaving worse than before. Users are complaining. Bug reports are piling up. The model scores higher on benchmarks, but it’s performing worse on what you actually need it to do.

This happens because generic benchmarks measure breadth across many tasks. Your application needs depth on one specific task. The only way to know if a model will work for you is to evaluate it on your data. This is why custom evals matter for production LLMs.

Let me show you what that looked like on a speech recognition project where custom evals made all the difference.

Generic benchmarks show improvement, but production applications can still perform worse on your specific use case.

The Problem We Faced

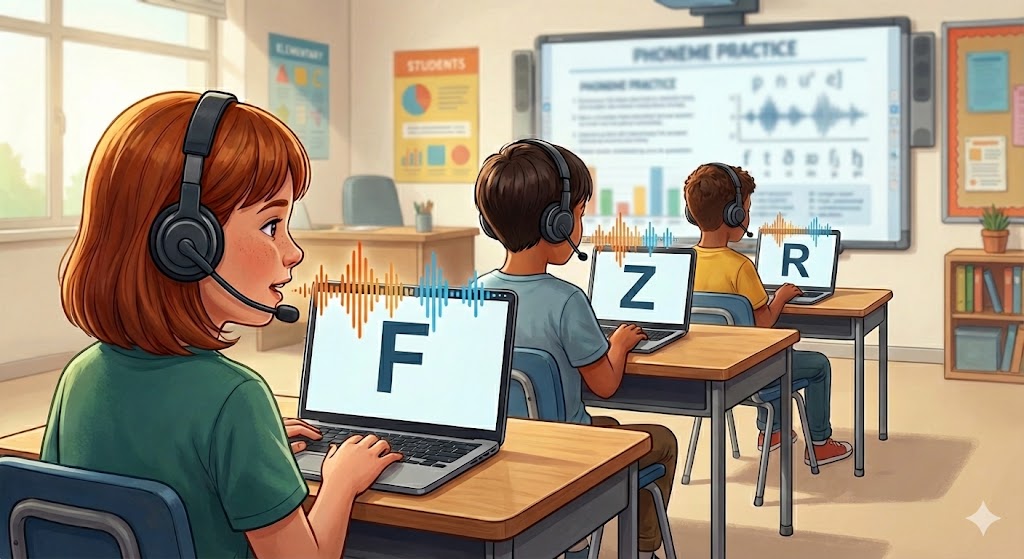

I worked on an education technology project for early elementary students learning to speak phonemes and letter names correctly. Think of it as helping kids master the fundamental building blocks of speaking and reading.

We built the initial app using OpenAI’s audio model. It worked reasonably well, but we had a major blind spot. We had no idea where it was actually failing. Some sounds were consistently misclassified, but we didn’t know which ones or why. Without that visibility, we couldn’t improve the product or make informed decisions about switching models.

The solution wasn’t complicated in theory, but it required real work. We collected over 500 audio traces from actual students speaking in real classrooms. These weren’t clean studio recordings. This was chaotic classroom audio with background noise, kids talking over each other, and all the messiness of the real world. We labeled each recording with whether the model’s classification was correct or incorrect, plus what the actual sound was supposed to be.

Real classroom conditions with background noise and chaos. This is the messy reality where our model needed to perform.

That gave us a domain-specific eval set. Not a generic "how good is this model at speech recognition" benchmark, but a specific answer to "how good is this model at recognizing the phonemes that matter for early readers in real classroom conditions."

This same approach works for any domain-specific LLM application. Document extraction, customer support, code generation, whatever. The principle is the same. Evaluate on your actual use case with your actual data.

What the Custom Eval Revealed

Avoided a Costly Migration

When a new OpenAI audio model came out with claims of improvements, we were ready to jump on it. Then we ran it through our custom eval. The results were all over the place. Better on some phonemes, worse on others. The overall improvement wasn’t worth the migration cost and risk of introducing new failure modes we’d have to debug.

This is the trap of trusting general improvement claims. A model can be better in some ways but perform worse on what you actually need. Whether you’re evaluating GPT-5 versus Claude Opus or comparing embedding models, custom evals tell you what generic claims can’t.

Found Where the Real Problems Were

Our eval revealed specific patterns. Nasal sounds like /m/ and /n/ were consistently confused because they sound acoustically similar. Plosive consonants like /k/ and /p/ got filtered out by noise-reducing classroom microphones that thought they were just background noise.

Knowing this let us focus our improvement efforts. We weren’t randomly trying different prompts or model settings hoping something would work better. We knew exactly which phonemes needed attention and could measure whether our changes actually helped. For the plosive issue, the fix wasn’t changing the model at all. It was upgrading to better headsets for the students.

Every domain has these edge cases that generic testing misses. The only way to find them is to look at your own data.

Shipped Updates Faster

Once we had the eval in place, we could quickly test new approaches. Trying a different prompt structure? Run it through the eval. Adjusting parameters? Test it against the eval. We went from "try it and see how it feels" to quantitative comparison.

That changed our iteration cycle from days to hours. No more guessing whether a change actually made things better or just felt better in the moment.

Deployed with Confidence

The eval gave us a clear bar for quality. We knew when accuracy was good enough for real students using the app. We could monitor whether performance degraded over time as OpenAI updated their models or we made changes to our pipeline.

We weren’t flying blind anymore, which matters when you’re building something that affects how kids learn to read.

Why This Matters

This pattern applies to any production LLM application. Generic improvement claims are built to make models sound better broadly. But production needs specificity. You need to know if a model works for your particular use case with your particular data in your particular conditions.

Building domain-specific evals takes time. For us, it meant collecting 500+ audio samples, labeling them correctly, and setting up the infrastructure to run new models and prompts through the eval consistently. That’s real work.

But it pays for itself immediately. We avoided a bad migration that would have cost us debugging time and potentially degraded the product. We found and fixed the actual problems instead of guessing. We shipped improvements faster because we could measure them objectively.

You can’t know if a model works for your use case without evaluating it on your data. The improvement claims won’t tell you. Only your own eval will.

Every production application needs this kind of evaluation infrastructure. Building this infrastructure is real work, which is why we’re focused on making it easier at Goodeye Labs. If you’re facing similar challenges figuring out whether your LLM actually works the way you need it to, check out what we’re building or get in touch.