Published on February 2, 2026 6:02 AM GMT

Think for a moment about what you believe 0-temperature LLM inference should represent. Should it represent the highest level of confidence for each specific word it outputs? Or, perhaps, should it represent the highest level of confidence for each specific idea it is trying to communicate? For example, if an LLM's output token distribution for a response to a question is 40% “No”, 35% “Yes”, and 25% “Certainly”, should a 0-temperature AI interpreter select “No” because it is the individual token with the greatest level of confidence, or should it select “Yes” or “Certainly” because they both represent the same idea and their cumulative probability of 60% represents the greatest degree of confidence? I personally believe the latter.

Standard LLM interpreters use greedy temperature, which depends only on the individual output probabilities given by an AI. As the temperature approaches 0, the tokens with the greatest probability get boosted and the tokens with the lowest probability get left out, until only the single most probable (modal) token remains. In most cases, this is fine, and the computational efficiency of this approach to temperature application justifies it. However, in scenarios like the one above, where the modal token has less than 50% probability, we can’t guarantee that the modal token represents the “median” semantic output. As such, greedy temperature is susceptible to vote-splitting whenever two or more output tokens represent the idea that the model is most confident in, causing the interpreter to output a response that often does not represent what the LLM would communicate most of the time if it were called repeatedly with standard temperature.
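To make the vote-splitting concrete, here is a minimal sketch using the 40/35/25 example from the opening paragraph. It assumes standard temperature is implemented in the usual way (raising each probability to the power 1/T and renormalising, which is equivalent to dividing the logits by T). The `idea_of` grouping is hand-written purely for illustration; it is not something a real interpreter would have available.

```python
import math

def apply_temperature(probs, temperature):
    """Standard temperature: p_i^(1/T), renormalised (same as softmax(logits / T))."""
    if temperature == 0:
        # Limit case: all mass on the single most probable token (greedy decoding).
        best = max(probs, key=probs.get)
        return {tok: float(tok == best) for tok in probs}
    scaled = {tok: math.exp(math.log(p) / temperature) for tok, p in probs.items()}
    total = sum(scaled.values())
    return {tok: s / total for tok, s in scaled.items()}

# Token distribution from the example in the opening paragraph.
probs = {"No": 0.40, "Yes": 0.35, "Certainly": 0.25}

# Hypothetical, hand-written mapping from tokens to ideas (illustration only).
idea_of = {"No": "negative", "Yes": "affirmative", "Certainly": "affirmative"}

def idea_mass(token_probs):
    """Sum token probabilities per idea."""
    mass = {}
    for tok, p in token_probs.items():
        mass[idea_of[tok]] = mass.get(idea_of[tok], 0.0) + p
    return mass

greedy_token = max(probs, key=probs.get)
ideas = idea_mass(probs)
top_idea = max(ideas, key=ideas.get)
print(greedy_token, top_idea)  # No affirmative
```

Lowering the temperature only sharpens the token-level distribution, so “No” keeps winning even though the affirmative idea holds 60% of the mass.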

Semantic temperature application works to resolve this by rewriting the temperature script to identify the “median” semantic intent behind what the model was communicating and boosting the probabilities of tokens that closely represent this intent as the temperature approaches 0. This can be done by using latent-space projections of prompts and outputs to perform PC1 reduction (projecting each candidate onto the first principal component of those latent positions), assuming that any disagreement in the model’s outputs can be modelled as some form of polarity (like “positive” vs “negative”, “left wing” vs “right wing”, etc.). My approach uses a smaller LLM to plot the possibilities in latent space as, in theory, it requires far less effort to identify whether two statements are similar or different than it requires to actually generate these statements.

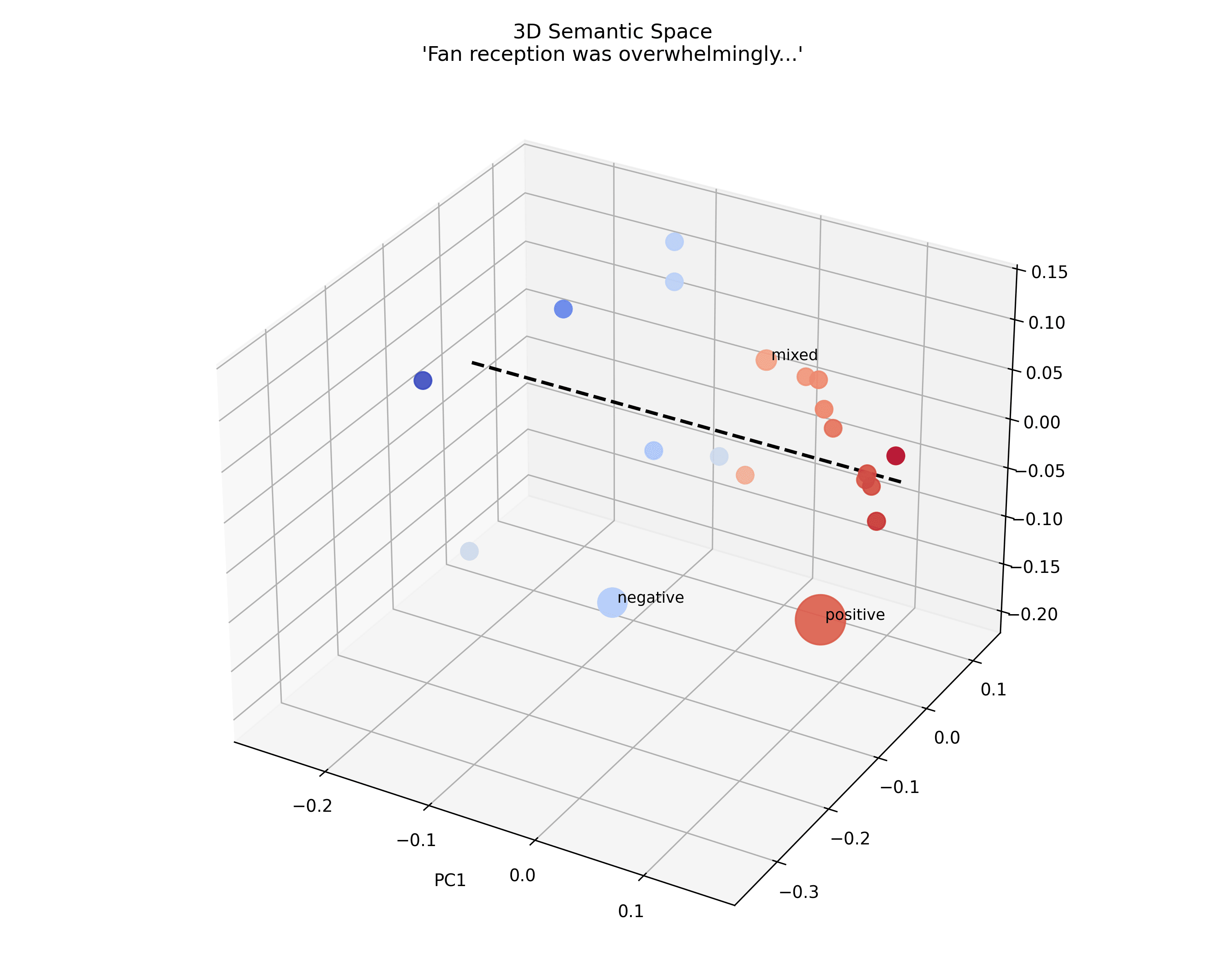

Figure 1: A 3D projection of how the latent-space positions of continuations to “The final season of the long-running show deviated significantly from the source material. Fan reception was overwhelmingly” are organised into polarised “positive” and “negative” sentiments through PC1 reduction.

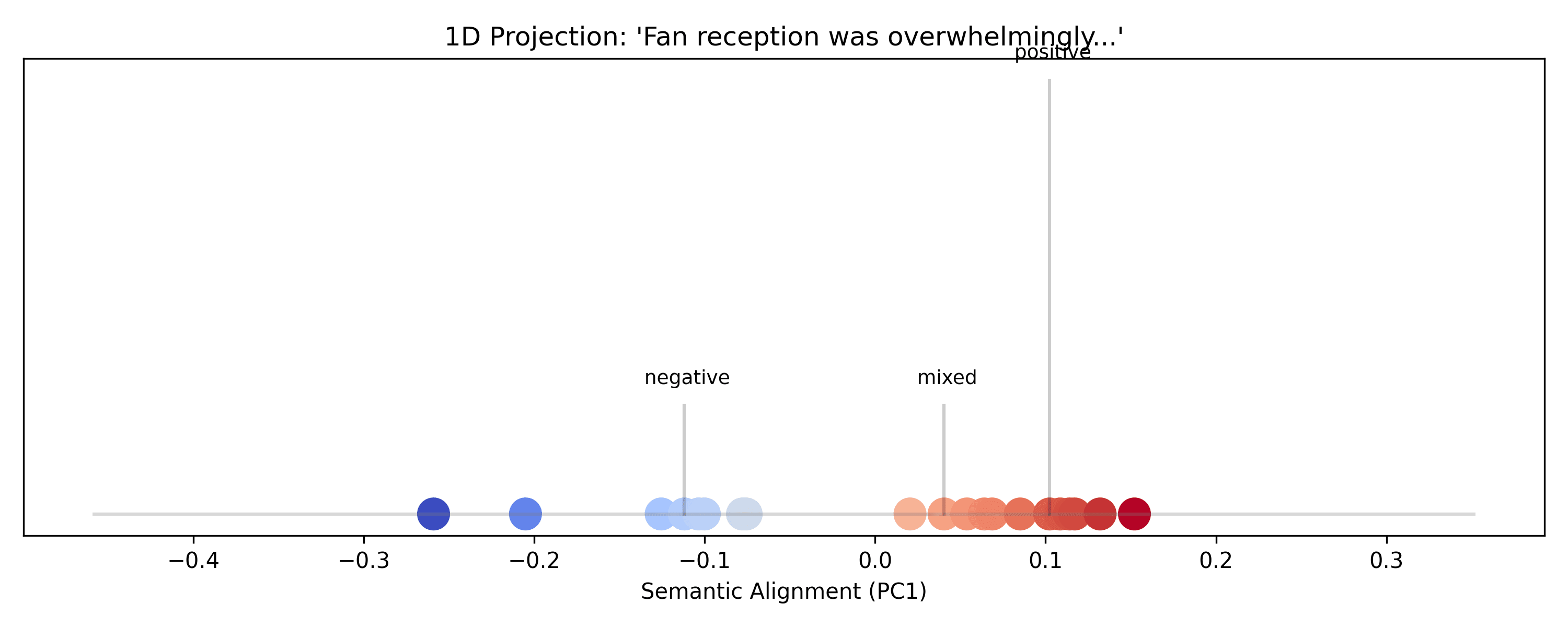

Figure 2: The ordering of the latent positions of continuations of “The final season of the long-running show deviated significantly from the source material. Fan reception was overwhelmingly” after being projected onto the PC1-reduced space.

Note how, in Figure 2, PC1 reduction successfully identifies the polarity between an overwhelmingly positive fan response and an overwhelmingly negative fan response, and identifies that an overwhelmingly mixed fan response would sit between these two continuations.
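As a rough illustration of the PC1 step, the sketch below orders a handful of candidate continuations along their first principal component. The three-dimensional `toy_embeddings` are invented stand-ins for the latent positions a smaller LLM would produce, and the power-iteration PCA is just one simple way to find PC1 without external libraries; neither is taken from the repository.

```python
import math

def first_principal_component(vectors, iterations=200):
    """Return (mean, unit PC1 direction) using power iteration on the covariance."""
    n, dim = len(vectors), len(vectors[0])
    mean = [sum(v[j] for v in vectors) / n for j in range(dim)]
    centred = [[v[j] - mean[j] for j in range(dim)] for v in vectors]
    direction = [1.0 / math.sqrt(dim)] * dim
    for _ in range(iterations):
        # Apply X^T X to the direction without building the covariance matrix.
        scores = [sum(c[j] * direction[j] for j in range(dim)) for c in centred]
        updated = [sum(scores[i] * centred[i][j] for i in range(n)) for j in range(dim)]
        norm = math.sqrt(sum(x * x for x in updated))
        if norm == 0.0:
            break  # all points identical: no principal direction exists
        direction = [x / norm for x in updated]
    return mean, direction

def pc1_order(embeddings):
    """Sort tokens by their coordinate along PC1 (the sign of PC1 is arbitrary)."""
    tokens = list(embeddings)
    mean, direction = first_principal_component([embeddings[t] for t in tokens])
    score = {
        t: sum((embeddings[t][j] - mean[j]) * direction[j] for j in range(len(mean)))
        for t in tokens
    }
    return sorted(tokens, key=score.get)

# Invented toy embeddings: the first axis loosely encodes sentiment.
toy_embeddings = {
    "positive":   [0.9, 0.1, 0.2],
    "favourable": [0.8, 0.2, 0.1],
    "mixed":      [0.1, 0.3, 0.2],
    "negative":   [-0.9, 0.2, 0.1],
    "hostile":    [-0.8, 0.1, 0.3],
}

ordered = pc1_order(toy_embeddings)
print(ordered)  # "mixed" lands between the positive and negative groups
```

Only the ordering along the line matters for the next step, so the arbitrary sign of PC1 (which end is “positive”) makes no difference.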

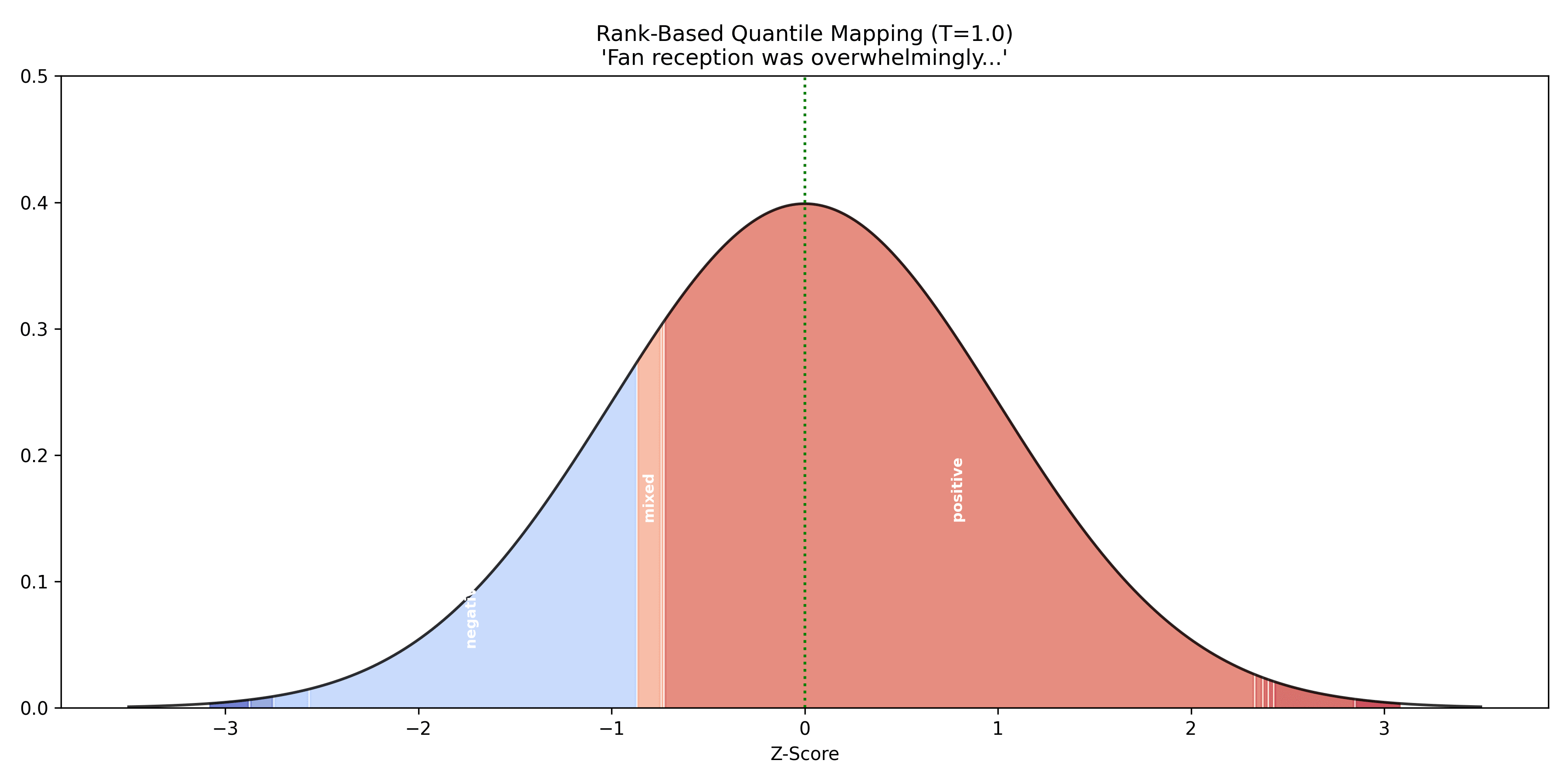

Once PC1 reduction is applied, we use the ordering of tokens along this PC1-reduced line and the initial output-probabilities for each of these tokens to map each token to “bins” along a normal distribution. Each bin is ordered according to the ordering of the PC1-reduced latent positions and the area under the normal distribution for each bin corresponds to the output probability for that token given by the initial LLM.

Figure 3: Output tokens of an LLM continuation of “The final season of the long-running show deviated significantly from the source material. Fan reception was overwhelmingly” after being ordered using PC1 reduction and mapped to a standard normal distribution using the output probabilities given by the LLM.
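The binning can be sketched as follows: walk through the tokens in PC1 order, accumulate their probabilities, and place each bin boundary at the standard normal quantile of the running total. This is a minimal sketch of the idea as described here, not the repository's code. The `example` probabilities are made up for illustration, and every probability is assumed to be strictly positive.

```python
from statistics import NormalDist

def bin_edges(ordered_probs):
    """Map PC1-ordered (token, probability) pairs to intervals of a standard normal.

    Returns a list of (token, left_edge, right_edge) whose areas under the
    standard normal curve equal the input probabilities.
    """
    std_normal = NormalDist()  # mean 0, standard deviation 1
    bins, cumulative, left = [], 0.0, float("-inf")
    for i, (token, p) in enumerate(ordered_probs):
        cumulative += p
        is_last = i == len(ordered_probs) - 1
        # The final bin runs to +infinity; the others end at the quantile
        # of the cumulative probability so far.
        right = float("inf") if is_last else std_normal.inv_cdf(cumulative)
        bins.append((token, left, right))
        left = right
    return bins

# Made-up probabilities, already sorted along PC1 (illustration only).
example = [("negative", 0.45), ("mixed", 0.15), ("positive", 0.40)]
bins = bin_edges(example)
for token, lo, hi in bins:
    print(f"{token}: [{lo:.3f}, {hi:.3f})")
```

In this made-up case the median of the distribution (position 0) falls inside the “mixed” bin, even though “mixed” is the least probable token, because it sits between the two larger groups.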

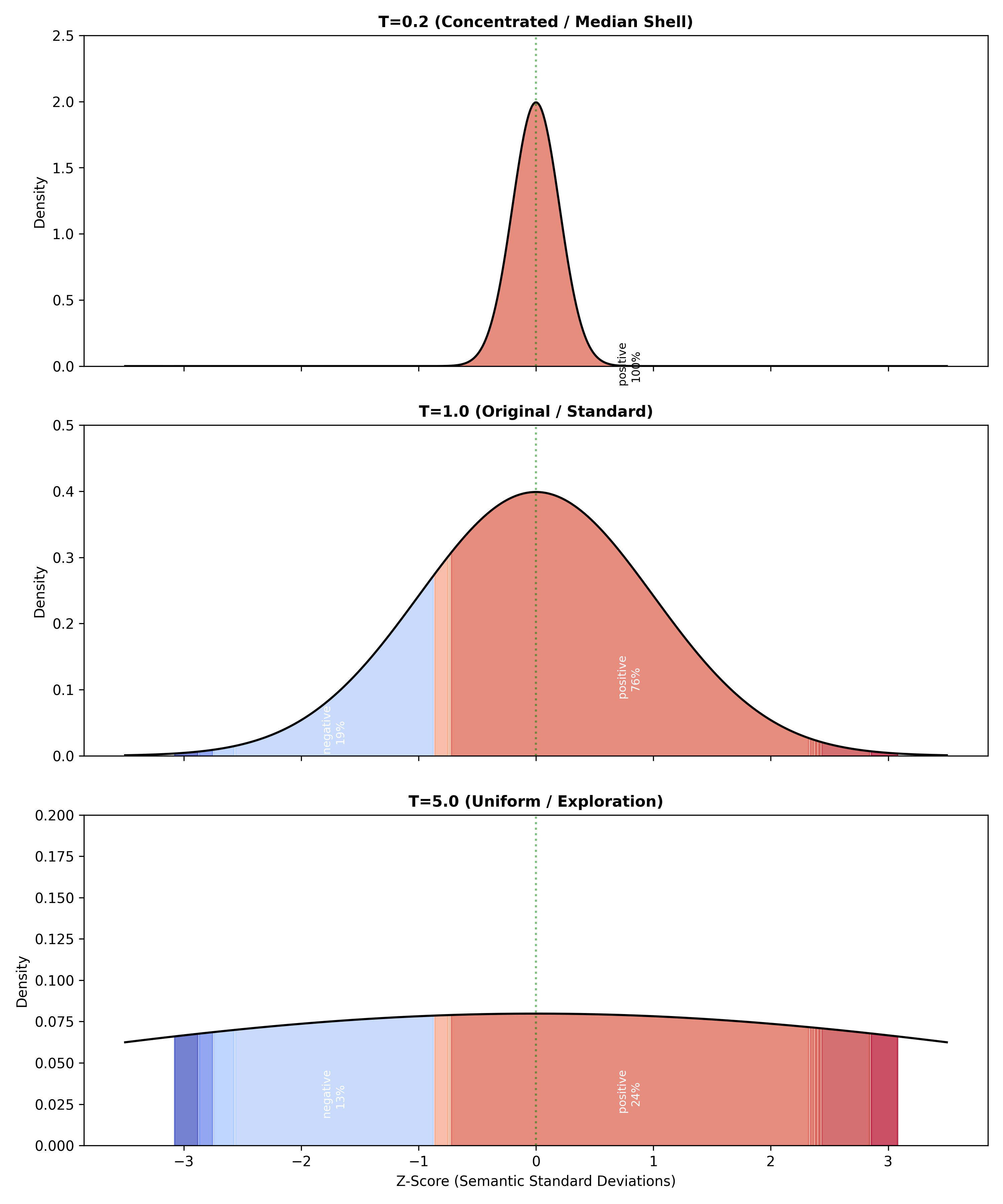

Now that the placement of each bin has been chosen so that they are ordered according to some semantic polarity and spaced according to the output probabilities of each model, we can achieve the desired behaviour of temperature by simply locking in the positions of each bin and setting the standard deviation of the normal distribution to be equal to the temperature. When temperature is set to 1, we simply have the standard normal distribution we calculated, so the output probabilities are exactly what is provided by the initial LLM, as required. As the standard deviation approaches 0, more of the area under the normal distribution is concentrated around the median semantic intent, until temperature reaches 0 and 100% of the weighting is within the bin of the token that contains the absolute median intent, as required. And, as the standard deviation approaches infinity, the probability distribution approaches a uniform distribution between the domain of the binned tokens, so that more creative ideas are given more weight without just giving equal weight to each token, allowing equal weighting of semantic ideas rather than individual tokens

Figure 4: A demonstration of how the probabilities of each token are adjusted as the temperature adjusts the standard deviation of the normal distribution defining their weights.
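A minimal sketch of this re-weighting is below. One assumption is worth flagging loudly: to get the uniform high-temperature limit described above, the sketch truncates the normal distribution to a finite domain `[-bound, bound]` and renormalises, since an untruncated normal would push all mass into the two outermost bins as the standard deviation grows. The `bound` parameter and its default of 3.0 are my own choices for illustration, and the token ordering in the usage example is hypothetical.

```python
from statistics import NormalDist

def semantic_temperature(ordered_probs, temperature, bound=3.0):
    """Re-weight PC1-ordered (token, probability) pairs.

    Bin edges are fixed at temperature 1; the normal's standard deviation is
    then set to `temperature`. `bound` (an assumption) truncates the domain.
    """
    ref = NormalDist(0.0, 1.0)
    lo_cdf = ref.cdf(-bound)
    total = ref.cdf(bound) - lo_cdf
    # Fixed bin edges: quantiles of the unit normal truncated to [-bound, bound].
    edges, cumulative = [-bound], 0.0
    for _, p in ordered_probs[:-1]:
        cumulative += p
        edges.append(ref.inv_cdf(lo_cdf + cumulative * total))
    edges.append(bound)

    if temperature == 0:
        # Limit case: all mass in the bin containing the median (position 0).
        return {tok: float(edges[i] <= 0.0 < edges[i + 1])
                for i, (tok, _) in enumerate(ordered_probs)}

    dist = NormalDist(0.0, temperature)
    norm = dist.cdf(bound) - dist.cdf(-bound)
    return {tok: (dist.cdf(edges[i + 1]) - dist.cdf(edges[i])) / norm
            for i, (tok, _) in enumerate(ordered_probs)}

# Opening example, with a hypothetical PC1 ordering of the three tokens.
ordered = [("No", 0.40), ("Yes", 0.35), ("Certainly", 0.25)]
for t in (0, 0.5, 1, 5):
    print(t, {tok: round(p, 3) for tok, p in semantic_temperature(ordered, t).items()})
```

With this ordering, temperature 0 selects “Yes”, because the median falls inside its bin, whereas greedy decoding would select “No”. At temperature 1 the original 40/35/25 split is recovered.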

As this approach depends only on the output probabilities of each token given by an LLM, this interpreter can be used today for token selection with any existing LLM, with the minor computational overhead of running a far smaller LLM than cutting-edge models to interpret the semantic meaning of the model's outputs. The capability of the interpreter improves when look-ahead semantic sampling is applied, so that more deviation between outputs can be reviewed by the smaller LLM, but this comes at the significant overhead of having the larger model produce many outputs per token selected. Looking only 1-2 tokens ahead is therefore recommended to maximise the interpreter's effectiveness whilst minimising the computational cost. To try this approach on your own self-hosted LLM, the repository for this interpreter can be found here: https://github.com/brodie-eaton/Semantic-LLM-Interpreter/tree/main

Although effective, this approach is only a band-aid to apply to currently deployed LLMs. Temperature should be applied at the model level rather than the interpreter level, so that the LLMs themselves can decide what their median intent is rather than depending on a smaller LLM and look-aheads to predict the median intent behind an LLM's output. More research is necessary either to determine how to give temperature as an input to a neural network, or to allow for safe separation of inputs and outputs from the LLM so that it does not produce any output at all until it achieves an acceptable level of confidence, whilst still allowing us to review its thought process for alignment validation.
