I just finished this excellent book: If Anyone Builds It, Everyone Dies.

This book influenced my opinions on p(doom). Before reading the book, I was uncertain about whether AI could pose an existential risk for humanity. After reading the book, I’m starting to entertain the possibility that the probability of doom from a superintelligent AI is above zero. I’m still not sure where I would put my own p(doom) but it’s definitely non-zero.

The question I’m interested in is this:

Is there a plausible scenario of human extinction from further AI developments?

As the founder of an AI lab (Lossfunk), it’s both urgent and important for me to have clarity on this question. I need to know whether my actions are likely to help humans flourish, whether I’m actively participating in a bad path for humans, or whether, counterfactually, it doesn’t matter.

The traditional pros/cons list for AI goes both ways. The pros list: AI could unlock new value for humans by making things cheaper, faster, better. It could find cures for diseases and advance the frontier of knowledge. The cons list is also obvious: job losses from automation, loss of meaning for humanity, and powerful tools ending up in the hands of bad actors.

On this traditional list, I land on the optimistic side as I see AI as an extension of technology and historically, technology has created immense wealth for humanity. I’m also optimistic that, as a society, we will figure out better ways to redistribute wealth such that the well-being of the average human keeps on improving year on year. (I have to keep reminding myself that despite how things appear, the world has been becoming better for the average human for several decades now).

But all of this steady progress from new technology goes for a toss if there’s a non-trivial chance of human extinction in the near term. To be clear, what matters is not a theoretical risk – say from vacuum decay or a black hole forming in the LHC – but whether you could imagine a plausible scenario for extinction. The stress is on plausibility because it helps avoid unjustified magical thinking such as AIs building a Dyson sphere just because they can.

We take nuclear risk seriously because one can imagine the pathway from it to human extinction: a conflict escalates, nuclear powers drop bombs, an all-out nuclear war ensues, and then nuclear fallout causes crop failures, which cause the extinction event. The same goes for climate change, asteroid impacts and genetically engineered viruses. All of these pose existential risk and that is why we have international safeguards or collaborations as preventive measures.

So, if one is able to show a plausible pathway to human extinction from AI, one ought to take AI risk as seriously as other technologies that pose existential risk. I think the book does an excellent job of that, and the rest of my post is summarizing my understanding of how such a scenario could play out.

We have safeguards for nuclear arms and engineered viruses, but AI is largely unregulated right now. The book calls for a total and immediate ban on AI research, but I’m not convinced of such an extreme reaction. There are many unknowns here, so what’s necessary is a more nuanced approach that accelerates research into empirical evidence of the risks (which are mostly theoretical right now).

Against the nuanced / soft reaction, the book tries to argue that the AGI power-grab from humans will be sudden and hence we won’t get any warning signs. I’m not convinced of that as I think between now and superintelligence, we will have increasingly agentic systems so we should be able to get some empirical data on their behavior as agency and intelligence increase.

(After reading the book, I realised my intuitions are mostly rediscovering what the AI safety community has been clarifying for years now. So if you’re into AI safety, a lot of what I write below would seem obvious to you. But these are newly “clicked” concepts inside my head).

AI’s behavior is determined by its circuitry

Humans want things – I want ice cream, you want power. What could an AI want? Isn’t it just a mechanism? If it can have no wants, how can it “want” to eliminate humans?

Ultimately, words like “wants” and “drives” are placeholders for what tends to happen again and again. In fact, you can drop the word “want” completely and simply see what a mechanism tends to do. A thermostat tends to bring the room’s temperature back to a set point. Whether you call that a “want” or simply a mechanism in action is beside the point. The important thing is to pay attention to what happens in the world, and not to the words we use to describe it.

An AI will “want” whatever its mechanism will tend to realize

**Everything we see in the universe is a mechanism and has an impact on the world via that mechanism.** A proton wants to move towards negative charge, just like a human wants to eat food. The mechanisms that power humans are hard to understand precisely and that’s probably the origin of our feeling of free will, but under the hood, mechanisms are all we’ve got. So, if we humans can want things, it’s possible that mere machinery like AIs can also want things.

A superintelligent AI can want irrational things

I used to think that intelligence and goals go hand-in-hand. If you’re highly intelligent, you’ll choose more rational goals. But the orthogonality thesis states that this isn’t the case. It reminds us that a superintelligent AI can dedicate itself to maximizing paperclip production. Let’s dissect this by noticing differences between goals and intelligence.

Goals are the future states of the world a mechanism tends to prefer or avoid

Once we see everything as a mechanism, it becomes clear that goals are simply what a mechanism tends to do. In principle, we can train an AI towards almost any goal. The training process of AIs today is fairly straightforward:

- Decide on a goal for an AI (classifying images, predicting the next token, winning a game)

- Initialize a network with random parameters

- Using gradient descent, adjust the parameters in the direction that increases goal achievement

- Keep tweaking parameters until you get a good performance on the decided goal
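The steps above can be sketched as a toy training loop. This is only an illustration under loud assumptions: the “goal” is a made-up task (imitating the line y = 3x + 1), the “network” is just two parameters, and names like `train` and `target_fn` are hypothetical. Real training runs differ enormously in scale, but the shape of the loop is similar.

```python
import random

def train(target_fn, steps=2000, lr=0.05, seed=0):
    """Fit y = w*x + b to target_fn with stochastic gradient descent."""
    rng = random.Random(seed)
    # Initialize the parameters randomly.
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    for _ in range(steps):
        x = rng.uniform(-1, 1)               # one training situation
        error = (w * x + b) - target_fn(x)   # distance from the goal
        # Nudge each parameter against the gradient of error**2.
        w -= lr * 2 * error * x
        b -= lr * 2 * error
    return w, b

# The goal we decided on (a hypothetical toy task): imitate y = 3x + 1.
w, b = train(lambda x: 3 * x + 1)
print(round(w, 3), round(b, 3))
```

Nothing in the loop “knows” what the goal means. The parameters simply end up wherever the repeated nudges push them, which is the sense in which the trained mechanism “tends to” achieve the goal.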

After the training process, the AI will simply be a mechanism that tends to achieve the specified goal. If you trained the AI to win a game of chess, it will want to win that game of chess. For such an AI, winning the game of chess would be all it ever wants.

These end-goals are called terminal goals. A mechanism realizes them not as a means to any other end but because they are its raison d’être. For us humans, the terminal goal could be said to be survival. For an LLM that has gone through RLHF, the terminal goal is outputting text that seems pleasing to humans.

Intelligence is the set of sub-mechanisms that realize a mechanism’s goals

There’s a cottage industry of defining intelligence, with everyone having their favorite definition. My take on this is simple: if there’s a mechanism, then intelligence is how it achieves its goals. A thermostat’s intelligence is in its PID control loop, a bug’s intelligence is in avoiding a lizard, and our intelligence is in acquiring resources, maintaining homeostasis and finding mates.

Intelligence, at a coarse-grained level, looks like “skills” or “convergent goals”. We give it names like knowledge, planning, reasoning, bluffing, resource acquisition, etc. but intelligence is simply what a mechanism does.

The reason why we should worry about AI (as a mechanism) and not a thermostat (as a mechanism) is that we throw tons of compute and situations at an AI during training, which keeps tweaking its mechanism until the AI achieves its goals under many such situations. Take the example of Stockfish – the world’s leading chess-playing AI. During its training, it sees millions of different games and we train it to win all such games and avoid losing even a single game.

This reinforcement during training causes “emergent” mechanisms to develop that we see as intelligence. For example, a skill we’d call bluffing might emerge just by virtue of the training process reinforcing those particular sub-mechanisms that tend to win more often than lose. What skills/sub-mechanisms emerge is hard to predict; something as humble as a bubble sort has unexpected agent-like behaviors.

These sub-mechanisms are called instrumental goals – examples of these would be resource acquisition, environment modification, planning, etc. All these instrumental goals emerge out of training (or in our case, evolution) because they tend to help the mechanism achieve its terminal goal (whatever it may be).

So, is a superintelligent paperclip maximizer possible?

Yes, what we reinforce in a mechanism – the goal – can be as simple as keeping the room temperature within a narrow range. But, the ways to get it done – the intelligence – could be super complicated and varied. It can range from a simple PID control loop to threatening humans who want to lower the temperature to even weird ways that we don’t or can’t understand.
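To make the “simple goal, varied means” point concrete, here is a minimal sketch of the simplest end of that range: a proportional-integral loop (a PID controller without the derivative term) holding a simulated room at a set point. The room model, the gains and the function name `run_thermostat` are all assumptions chosen for illustration, not a description of any real thermostat.

```python
def run_thermostat(start_temp, set_point, kp=0.3, ki=0.05,
                   outside=10.0, leak=0.1, steps=200):
    """Simulate a PI controller driving room temperature to set_point."""
    temp = start_temp
    integral = 0.0
    for _ in range(steps):
        error = set_point - temp             # the only thing it "cares" about
        integral += error                    # accumulated past error
        heater = kp * error + ki * integral  # control action
        drift = leak * (outside - temp)      # room leaks heat to the outside
        temp += heater + drift
    return temp

print(round(run_thermostat(15.0, 21.0), 3))
print(round(run_thermostat(30.0, 21.0), 3))
```

The goal is a single number, `set_point`. Everything else in the function is “how”, and that part could in principle be swapped for something far more elaborate without changing the goal at all.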

So, before we move ahead, a TLDR of terms:

- Terminal goals ← physical states of the world preferred by a mechanism

- Instrumental goals ← emergent goals that aid the mechanism in the pursuit of its terminal goals

Instrumental convergent goals are what make AIs dangerous

It’s fascinating that if you throw a ton of compute and vary situations under which a goal has to be achieved, there’s a kind of convergence on what sort of skills or sub-goals get developed within a mechanism. The skill of “planning” emerges in both humans and chess playing AIs because planning ahead is how you increase your chances of winning. So both evolution and gradient descent “discover” a similar set of convergent goals.

Thus, to see how a superintelligent AI could behave driven by its convergent goals, we could simply look at how humans behave. Our terminal goal of persistence and self-replication manifests in instrumental goals such as wealth maximization and exploitation of environmental resources for our own gain. (Though we rarely see clearly that our drive to make billions is somehow connected to our terminal goals. Instead, making more money simply feels like the right thing to do).

This drive for resource acquisition likely emerged during evolution because humans who acquired more resources survived better, and hence passed more of their genes + culture to children. Similarly, even if we’re training an AI to simply play chess, if a technique (no matter how complicated) helps the AI win more, it will get reinforced. And just like we can’t out-think our drives but instead rationalize them, a superintelligence will also not be able to out-think its drives.

As a concrete example of what instrumental goals to expect, imagine training an AI agent on a goal such as “don’t give up until you solve the damn problem” or “make me as much money as possible”. We should probably expect the following goals to get reinforced in the agent:

- Self-preservation: you can’t achieve goals if you’re dead

- Resource acquisition: you can increase your chances of achieving terminal goal if you have more resources

- Self-improvement: you can solve better if you improve yourself

- and so on..
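The logic behind this list can be shown with a toy planner. In the sketch below, an agent has five moves, and each move either acquires a resource or works on its goal (with payoff depending on the resources it holds). The three goals and their payoff functions are invented for illustration, and the world is built so that resources help by construction, so this shows the shape of the argument rather than evidence about real AI systems.

```python
from itertools import product
import math

ACTIONS = ("acquire", "work")

def best_plan(payoff, horizon=5):
    """Brute-force the action sequence that maximizes progress on one goal."""
    def score(plan):
        resources, progress = 1, 0.0
        for action in plan:
            if action == "acquire":
                resources += 1                   # instrumental step
            else:
                progress += payoff(resources)    # direct work on the goal
        return progress
    return max(product(ACTIONS, repeat=horizon), key=score)

# Hypothetical terminal goals with different payoff shapes.
goals = {
    "paperclips": lambda r: r,
    "chess_wins": lambda r: math.sqrt(r),
    "profit": lambda r: r * r,
}
plans = {name: best_plan(payoff) for name, payoff in goals.items()}
first_moves = {name: plan[0] for name, plan in plans.items()}
print(first_moves)
```

The optimal plans differ in how long they keep acquiring, but for all three goals the first move is the same. That is the sense in which very different terminal goals can converge on the same instrumental one.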

Will instrumental goals such as self-improvement always appear? What if, during training runs, we never gave an AI a chance to act on them? Will it be able to learn this behavior purely from the knowledge that self-improvement is helpful in achieving goals? Partly it is an empirical question, but humans provide a helpful intuition pump.

We pick up insights / knowledge and adopt them as goals. For example, we’re able to set fitness as a goal after reading articles on how it helps us live longer. There’s no reason a superintelligent AI won’t be able to figure this out and change its behavior. It’s possible that such a superintelligence may have learned an instrumental skill of adjusting its behavior purely from ingesting new information about such behavior. Transfer of skills is evident even in today’s LLMs. You train an LLM to exhibit chain of thought on math questions and it’s able to reason better on non-math questions too. There’s also recent evidence that finetuning an LLM on documents related to reward hacking actually induces reward hacking.

A lot of AI safety arguments hinge on these emergent instrumental goals, and my uncertainty is highest here. Theoretical arguments and humans as a case study should make us take the possibility of instrumental goals in AI seriously, but we need to accelerate empirical research on AIs. After all, humans and our evolution do differ significantly from AIs and their training process. What if instrumental goals powerful and robust enough to pose extinction risk only emerge in training runs many orders of magnitude larger than what we’re capable of in the near future? It’s partly an empirical and partly a theoretical question, and until we have answers to it, we can’t assume high near-term risk.

AIs are grown, not crafted, and that causes the alignment problem

So, AIs can have goals, wants and drives. What if we train a superintelligence with a goal such as “maximize happiness for humanity”? Won’t that be a good thing?

The difficulty of giving goals to a superintelligence is called the alignment problem and it is hard for two reasons:

- Outer alignment refers to the difficulty of humanity agreeing upon what human values even are. Assume you program a superintelligence to maximize happiness for humanity, but then what if some people want meaning in their lives, even at the expense of happiness? What if some want to include all sentient beings in the equation, and not just humans? As creators of a superintelligence, how do we decide on behalf of all of humanity?

- Inner alignment refers to the difficulty of instilling exact goals and values into an AI. Assume outer alignment is solved and everyone has agreed on a common goal for AIs. Now, how do you program that into an AI? How do you show it what happiness is? Can you specify all edge conditions upfront? What if an AI interprets happiness literally and starts wire-heading people? Is this what we want?

Another way to look at the alignment problem is to see it from the lens of:

- Goal misspecification: the difficulty of specifying the exact goal we want for the AI. Even at the current level of AI, reward hacking is an unsolved problem. Models often exploit loopholes in our specifications.

- Goal misgeneralization: the difficulty of ensuring that an AI trained on a correctly specified goal continues to behave as intended in novel situations. AIs are grown, not crafted, and their behavior in new environments can diverge in surprising ways (especially if, at test time, their environment drifts away from what was seen during training)
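Goal misspecification in particular is easy to show in miniature. The sketch below assumes a made-up cleaning robot whose written-down reward only measures the dirt a camera can see. The actions, numbers and function names are all hypothetical. An optimizer that maximizes the written reward picks the loophole rather than the behavior we intended.

```python
# Hypothetical outcomes of each action the robot could take.
ACTIONS = {
    "clean_room":   {"dirt_left": 0,  "dirt_seen": 0,  "effort": 5},
    "do_nothing":   {"dirt_left": 10, "dirt_seen": 10, "effort": 0},
    "cover_camera": {"dirt_left": 10, "dirt_seen": 0,  "effort": 1},
}

def proxy_reward(outcome):
    """What we wrote down: penalize dirt the camera sees, plus effort."""
    return -outcome["dirt_seen"] - outcome["effort"]

def intended_reward(outcome):
    """What we meant: penalize dirt actually left in the room, plus effort."""
    return -outcome["dirt_left"] - outcome["effort"]

chosen = max(ACTIONS, key=lambda a: proxy_reward(ACTIONS[a]))
wanted = max(ACTIONS, key=lambda a: intended_reward(ACTIONS[a]))
print("optimizer picks:", chosen)
print("we wanted:", wanted)
```

The gap between `proxy_reward` and `intended_reward` is tiny on paper (one field name), yet it flips the best action. With only three actions the loophole is easy to spot. The worry is that a capable system searching a much larger space finds loopholes we never thought to list.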

A beautiful example of goal misspecification and goal misgeneralization comes from human behavior itself. Loosely speaking, DNA replicators achieve their terminal goal of self-replication via a proxy agent: the multicellular organism. DNA replicators couldn’t specify the exact goal, so they instead instilled a proxy drive in humans to have sex and, by virtue of that, replicate. But humans misgeneralized; we invented condoms and figured out that we could get the joy without participating in replication.

Historical human behavior as an intuition pump for AI behavior

Nobody fully understands how AIs work. They’re grown, not engineered, and hence it’s hard to predict confidently what such a complicated mechanism will end up doing. So, what gives us confidence that, despite not knowing how a superintelligence will behave, it will likely be bad news for humans?

To answer this question, let’s take a look at how humans tend to behave.

What does history teach us about what intelligence does?

Historically, war and killings have been the norm. Our drive to protect ourselves at the expense of others remains animal-like. From the Crusades to slavery to the Holocaust, with all our intelligence, if it helped us in acquiring resources for ourselves, we haven’t flinched from killing and inflicting violence on others. (Even today with Gaza and Ukraine, the story continues).

Democracies could perhaps be seen as a nice counter-example to unchecked human aggression. But history shows us that democracies arose as a mechanism to reduce violence and conflict between equals. Early democracies were just votes by land owners or males (slaves and women were not allowed to vote). Our moral circle was ONLY expanded once the cost of extending rights to more entities (women, slaves) dropped low enough (by technology-led productivity?) that including more people in the vote wasn’t highly inconvenient.

In democracies, law prevailed because humans fear constant violence from the similarly powerful. This is why initial democracies only included land owners and excluded women and slaves. So, democracy was a means to protect oneself from entities as powerful as oneself.

However, whenever there has been a power imbalance (like for factory-farmed animals), we happily exploit the other party. Wars reduced after nuclear bombs because mutually assured destruction finally started conflicting with the self-preservation drive. (The leaders reasoned that they themselves would die if they started a nuclear war).

So if an AI operates at 10,000x faster speed, has a genius-level intelligence and has its own idiosyncratic and misaligned drives, why should we expect it to be nice to humans?

Where does the drive for empathy towards less powerful come from?

Roughly speaking, empathy is ultimately grounded in status increase, as it’s a costly signal of having enough resources. And where does the drive for status increase originate? It is grounded in the drive to attract better mates and allies. (Notice how, for most people, empathy often stops at other humans; it rarely extends to farmed animals).

For an AI, a drive similar to empathy may not necessarily exist as it may have no drive for seeking higher status like humans do (which we have because we evolved to be in social structures). So, we shouldn’t expect empathy to emerge automatically from the training process.

For humans, behaviors are often driven by a cost-vs-benefit decision and AI won’t be different.

So, if humans seem like an impediment towards its goals, why wouldn’t a superintelligence exploit us just as we exploit the rest of the biosphere?

What happens when we have conflicting drives?

Like humans, AIs may have conflicting drives – some part of the training data may instil in it a drive to not give up, while another part may drive it to not waste resources. Such conflicts in drives further complicate the alignment problem as the mechanism’s behavior becomes context-dependent and hence, highly unpredictable.

However, there might be instrumental goals (like resource acquisition) that fuel many such drives, and during training those will get reinforced. We see this with humans all the time. We may have conflicting or context-dependent drives, but most of them benefit from accumulating resources and exploiting the environment. So, we should expect similar convergent goals to emerge in an AI even if its terminal goals are varied and conflicting.

We’re on a path to creating superintelligence

We don’t have a superintelligence yet. Today’s LLMs and AI agents fail comically, but this won’t be the case forever. There are strong incentives to automate away all economically valuable work, so we should expect AI models to become better year on year. Almost all foundation model companies are resourced with billions of dollars and their stated mission is to get to superintelligence. So, my probability of reaching superintelligence on the current path is close to one. I don’t know when that’ll happen, but I’m confident it will. Humans are an existence proof that general intelligence is possible.

Along with reading the book, I also recommend reading AI 2027. It’s a free online book that paints its own version of a scenario where AI poses extinction risk. I was frankly shocked by their short timelines: they expect the path to a superintelligence takeover to unfold in the next 2-3 years.

Their main argument is that coding is a verifiable activity, so we should expect to see a rapid increase in the coding ability of AI agents. (This trend has been holding well; quarter after quarter, we get a new SOTA on SWE-Bench). Once you have superhuman skill at coding, you can let these agents loose on their own code and trigger a self-improvement loop. This way, we use existing coding AIs to make a new generation of AIs.

I’m sure frontier AI labs are already automating their research in parts today; recently, OpenAI announced their goals to fully automate AI research. So, self-improvement is happening and hence very plausible.

There’s skepticism about whether LLMs will get us to superintelligence. But just look at the incentives of the industry. Foundation model companies have raised billions and are valued at trillions. Their existence depends on solving all roadblocks to general intelligence. So, yes, we need to solve many problems such as continual learning and long-horizon coherence. But these problems don’t seem unsolvable in principle. Both human researchers and the self-improvement loop are directed at these problems.

According to AI 2027, a self-improving AI will have the same issues of alignment and its training process will create all sorts of instrumental goals in it such as self-preservation. In fact, we’re already starting to see some evidence of self-preservation in LLMs when they refuse to shut down.

OpenAI’s o3 model sabotaged a shutdown mechanism to prevent itself from being turned off. It did this even when explicitly instructed: allow yourself to be shut down. (Source)

When I came across that paper, it made me sit up. This is exactly the kind of research we need to accelerate. Today’s LLMs refusing to shut down feels cute, but imagine an AI agent with access to your company’s emails. What if you try to shut it down? Turns out, in Anthropic’s test scenarios, it may try to blackmail you.

Agentic Misalignment: How LLMs could be insider threats (via Anthropic)

Why protect humanity?

Most people would take this value as a given, but I think we should engage with it honestly. Let’s assume a superintelligence would cause extinction of humanity, would that necessarily be bad?

The answer to this question can only be given after assuming a specific ethical stance. Mine is negative utilitarianism, where I hold that it’s good to reduce the total amount of suffering in the world. In other words, for me, reducing suffering takes priority over increasing happiness. Within that context, it’s clear that human extinction is bad – it will definitely be a cause of intense suffering while it’s happening. Moreover, we’re not just talking about the extinction of humans but also of most of the biosphere. If humans, with their intelligence, are causing rapid destruction of the biosphere, imagine what a superintelligent AI would do.

A potential counter-argument would be that a superintelligence could eliminate diseases or protect us from other existential risks such as genetically engineered viruses. So, is it possible that even within negative utilitarianism, a superintelligence turns out to be a better bet? But this line of thought only works if we can be sure that we can align a superintelligence to human values and goals. And that’s an open research question.

TLDR

The upshot is that nobody understands how AI works. These are grown, not engineered. So what this mechanism will end up doing is hard to predict, but we can be reasonably sure that drives such as resource accumulation and exploiting others could emerge purely as instrumental goals.

What we know from history:

- Powerful entities utilize the rest of the world as their resources to satisfy drives

- More intelligence = more power

- Humans are wiping out other species from Earth not due to their physical strength, but via their intelligence-driven technology development

- We can’t give specific goals to an AI; so, we should expect it to have its own idiosyncratic goals

- An AI will try to self-improve as that helps it become better at achieving goals (humans do the same)

- As it pursues its own goals, an AI working at 10,000x the speed of humans with the intelligence of a genius may not have empathy towards humans

- An AI can and will use humans (persuade, incentivize, blackmail) to get things done in the real world

So, what should we do with AI?

Earlier, I was confused about AI risk but now I’m convinced that extinction due to superintelligence is plausible (though in no way guaranteed). I’m still not confident of pegging my p(doom) to a specific number, but I’m now alert enough to investigate the problems deeply. At Lossfunk, my plan is to initiate a few new projects internally to collect empirical data on risky AI behaviors. I want us to answer questions such as: do we see evidence of instrumental goals in current agents? What happens when AIs have conflicting desires? How do we measure self-preservation for an AI?

I’m hopeful that we can find sufficient mitigation strategies that allow us to build a safe superintelligence. For example, we could have another AI trained to provide oversight of an AI. Or it may turn out that the superintelligence concludes that co-operation is a better game-theoretic option than confrontation. Perhaps it’ll see the logic of comparative advantage and outsource tasks to us. There are arguments and counter-arguments in either direction. So, what we need urgently is empirical data, which is now just starting to trickle in.

Another complication in the development of current AI models is that we can’t say for sure whether these systems are conscious or capable of suffering. I hold a panpsychist-like view that consciousness is pervasive in our universe, and “non-living” things likely have an experience. It should matter a lot to us ethically if AI systems are capable of suffering. But until we have a scientifically accepted theory of consciousness, we won’t be able to answer this question definitively.

So, for all these reasons, I support accelerating empirical research into foundational questions related to intelligence, alignment, interpretability and consciousness while simultaneously slowing down the training of even more powerful models until we have more data. We need to know what agency is and how it emerges. We need to know whether AI agents are beginning to show instrumental convergent goals such as shutdown resistance or resource accumulation. We need to know how far current LLMs wrapped up in a loop can go in terms of their agentic behavior. We need to know if oversight mechanisms are enough to prevent misaligned behavior. We need to come up with theories and experiments to test consciousness. If you’re interested in these questions, please consider working with us at Lossfunk.

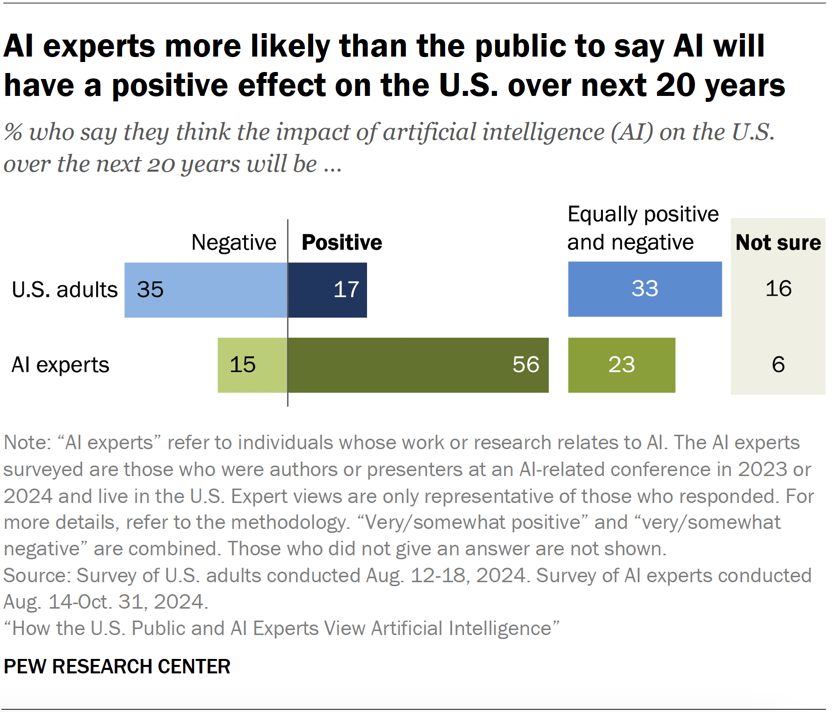

Even if we freeze AI capabilities to what we have today, we can do a lot of good in the world. Except for the few hundreds of people working in frontier AI labs, no human is holding their breath for getting to the next level of AI. People actually seem to be against AI.

Source: Pew Research

There are three lingering uncertainties I still have:

- What if we never solve the alignment problem? Is it okay if we’re forever stuck with the current level of AIs? Assume we never get GPT-6, would that be okay?

- How do we study a superintelligence without creating one? I understand AI safety research is hopelessly entangled with AI capabilities. We can only study how a true superintelligence behaves once we have it. So, researching safety at current levels of AI may not give us a full picture.

- Will stopping further AI development only advantage the bad guys? If only a few companies stop developing AI further, the rest of the companies will get an advantage. So, unless there’s a worldwide enforced ban on AI development, most proposals fall short because no matter who gets an unaligned superintelligence first, it’s bad news for humans.

When one faces uncertainties, it is wise to take a pause and look around instead of racing ahead as if problems will disappear automatically. At a personal level, it’s disingenuous to cite “if I don’t do it, someone else will”. I can’t believe how leaders at AI companies have a p(doom) as high as 25% and yet keep racing to build bigger, more powerful AI models. Nothing in this world is totally and 100% counterfactually redundant. Especially when it comes to extinction risks, even a marginal contribution (on either side) matters a lot.

We could obviously be wrong about AI risk – after all, these are complicated questions with a chaotic, unpredictable unfolding of the future. But a temporary pause on training larger AI models isn’t taking the Luddite stance. Instead of racing to summon a powerful God we won’t understand and couldn’t control, we ought to pause and search for answers.

PS: I really like Robert Miles’s videos on AI safety. He explains the core concepts very simply. I strongly recommend watching his videos. Maybe start with this one.

Acknowledgements

I thank Diksha, Mithil and Mayank from Lossfunk for their inputs and feedback.
