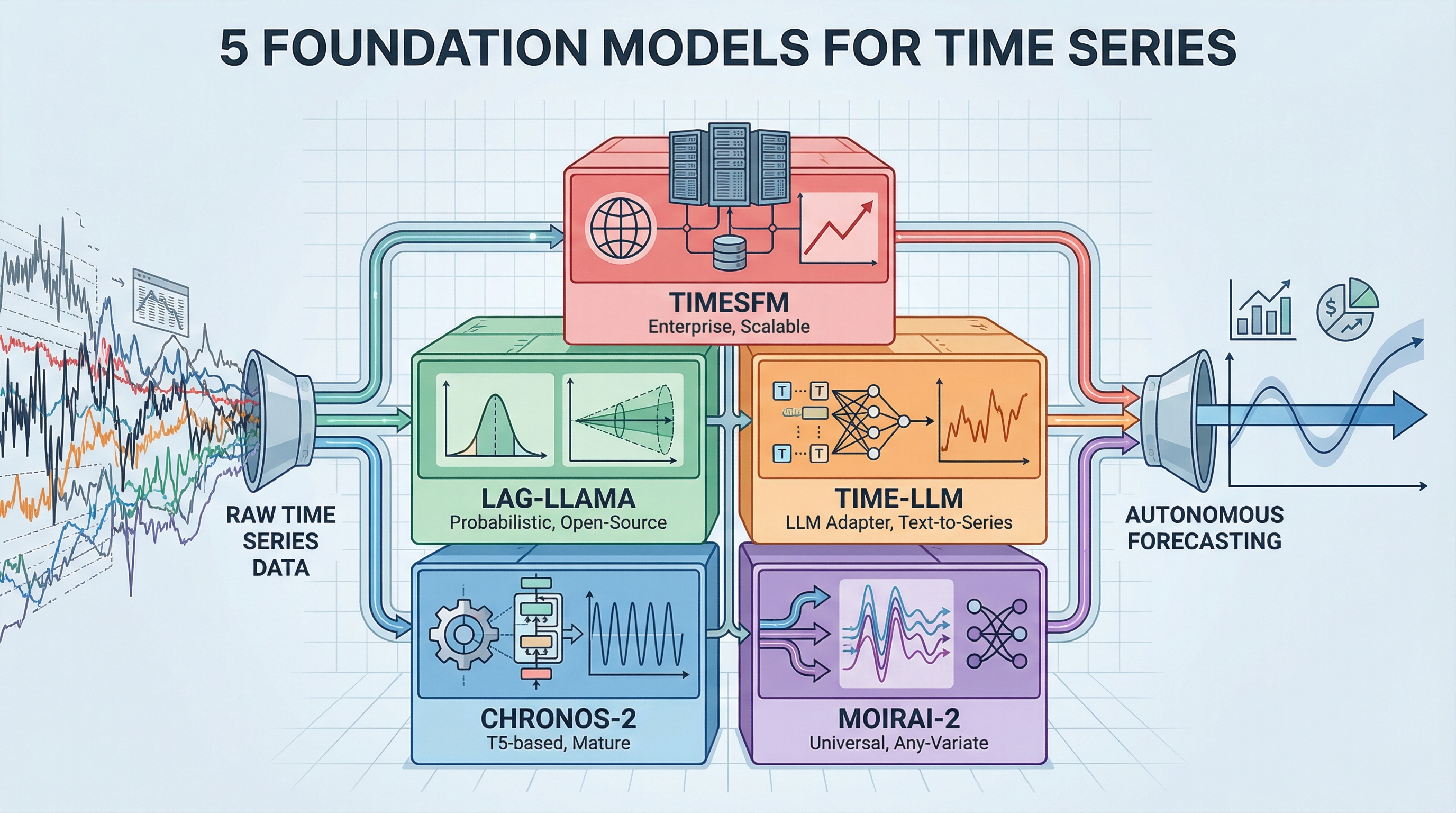

The 2026 Time Series Toolkit: 5 Foundation Models for Autonomous Forecasting (Image by Author)

Introduction

Most forecasting work involves building a custom model for each dataset: fit an ARIMA here, tune an LSTM there, wrestle with Prophet’s hyperparameters. Foundation models flip this around. They’re pretrained on massive amounts of time series data and can forecast new series without additional training (zero-shot), much as GPT can write about topics it was never explicitly trained on. This list covers the five foundation models you need to know for building production forecasting systems in 2026.

The shift from task-specific models to foundation model orchestration changes how teams approach forecasting. Instead of spending weeks tuning parameters and gathering domain expertise for every new dataset, teams start from a model that has already learned common temporal patterns such as trend and seasonality. Because no per-dataset training is needed, teams get faster deployment, better generalization across domains, and lower training costs, without building extensive machine learning infrastructure.

1. Amazon Chronos-2 (The Production-Ready Foundation)

Amazon Chronos-2 is the most mature option for teams moving to foundation model forecasting. The original Chronos models are pretrained transformers based on the T5 architecture that tokenize time series values through scaling and quantization, which lets them treat forecasting as a language modeling task. Chronos-2, released in October 2025, extends the family beyond univariate forecasting to multivariate and covariate-informed forecasting.
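
To make the "scaling and quantization" idea concrete, here is a minimal sketch of how a real-valued series can be turned into discrete tokens. It assumes mean scaling (divide by the mean absolute value of the context) and uniformly spaced bins over a fixed range; the defaults of 4094 bins over [-15, 15] are an assumption meant to resemble the setup described for the original Chronos models. The function names are hypothetical. This illustrates the technique and is not Chronos's actual code.

```python
import bisect


def mean_scale(context):
    """Mean scaling: divide each value by the mean absolute value of the context."""
    scale = sum(abs(x) for x in context) / len(context)
    if scale == 0.0:
        scale = 1.0  # all-zero context: leave values unchanged
    return [x / scale for x in context], scale


def uniform_bins(num_bins=4094, low=-15.0, high=15.0):
    """Uniformly spaced bin centers on [low, high] plus the edges halfway between them.

    The default bin count and range are illustrative assumptions.
    """
    step = (high - low) / (num_bins - 1)
    centers = [low + i * step for i in range(num_bins)]
    edges = [(a + b) / 2 for a, b in zip(centers, centers[1:])]
    return centers, edges


def tokenize(context, centers, edges):
    """Scale the series, then map each value to the id of the bin it falls in.

    Values outside [low, high] land in the first or last bin.
    """
    scaled, scale = mean_scale(context)
    tokens = [bisect.bisect_right(edges, x) for x in scaled]
    return tokens, scale


def detokenize(tokens, scale, centers):
    """Invert the mapping: bin center times scale (lossy by up to half a bin)."""
    return [centers[t] * scale for t in tokens]


# Usage with coarse 0.1-wide bins so the token ids are easy to read.
centers, edges = uniform_bins(num_bins=301)
tokens, scale = tokenize([10.0, 12.0, 14.0, 12.0], centers, edges)
print(tokens, scale)  # [158, 160, 162, 160] 12.0
print(detokenize(tokens, scale, centers))
```

Once values are token ids, a language model can be trained to predict the next token, and sampled tokens are mapped back to values with `detokenize`. The round trip is lossy: each value is recovered only to within half a bin width times the scale. Finer bins reduce that error at the cost of a larger vocabulary.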

The model delivered state-of-the-art zero-shot accuracy at release, often beating tuned statistical models out of the box, and can process 300+ forecasts per second on a single GPU. With millions of downloads on Hugging Face and integration with AWS tools such as SageMaker and AutoGluon, the Chronos family has the strongest documentation and community support among foundation models. The family comes in a range of sizes, from about 9 million to 710 million parameters, so teams can balance accuracy against computational constraints. Check out the implementation on GitHub, review the technical approach in the research paper, or grab pretrained models from Hugging Face.