A study led by UC Riverside researchers offers a practical fix to one of artificial intelligence’s toughest challenges by enabling AI systems to reason more like humans—without requiring new training data beyond test questions.

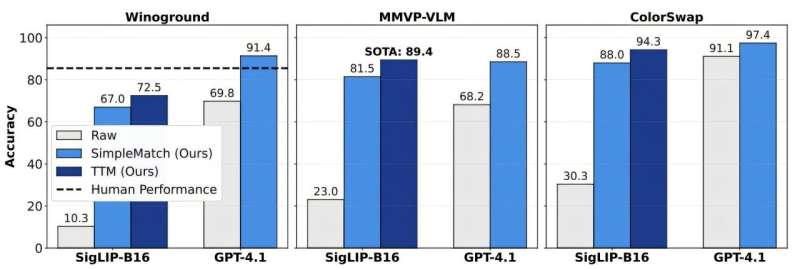

In a paper posted to the arXiv preprint server titled "Test-Time Matching: Unlocking Compositional Reasoning in Multimodal Models," assistant professor Yinglun Zhu and his students introduce a method called Test-Time Matching, or TTM. The approach significantly improves how AI systems interpret relationships between text and images, especially when presented with unfamiliar combinations.

"Compositional reasoning is about generalizing in the way humans do and understanding new combinations based on known parts," said Zhu, who led the study and is a member of the Department of Electrical and Computer Engineering at the Bourns College of Engineering. "It’s essential for developing AI that can make sense of the world, not just memorize patterns."

Today’s leading AI models perform well on many tasks, but they can falter when asked to align visual scenes with language under compositional stress—such as when familiar objects and relationships are rearranged and described in new ways.

Researchers use specialized tests to evaluate whether AI models can integrate concepts the way people do. Yet models often perform no better than chance on these tests, suggesting they struggle to grasp the nuanced relationships between words and images.

Zhu’s team found that existing evaluations may unfairly penalize models.

Widely used evaluation metrics rely on isolated pairwise comparisons, imposing extra constraints that can obscure the best overall matching between images and captions, Zhu said.

To address this, the team created a new evaluation metric that identifies the best overall matching across a group of image-caption pairs. This metric improved scores and revealed previously unseen model capabilities.
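The difference between the two scoring styles can be illustrated with a small sketch. The article does not give the exact formulas, so everything here is an assumption made for illustration: the similarity matrix `sim` (where `sim[i][j]` is a model's score for image `i` with caption `j`, and the correct caption for image `i` is caption `i`), the function names, and the numbers are all hypothetical and are not taken from the paper.

```python
from itertools import permutations

def pairwise_group_correct(sim):
    """Strict pairwise check (assumed form): every correct image-caption
    pair must outscore every mismatched pair sharing its image (same row)
    or its caption (same column)."""
    n = len(sim)
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            # Row check: image i must prefer caption i over caption j.
            # Column check: caption i must prefer image i over image j.
            if sim[i][i] <= sim[i][j] or sim[i][i] <= sim[j][i]:
                return False
    return True

def matching_correct(sim):
    """Matching-based check (assumed form): the correct one-to-one
    assignment must have a strictly higher total score than any other
    one-to-one assignment of captions to images."""
    n = len(sim)
    identity = tuple(range(n))

    def total(perm):
        return sum(sim[i][perm[i]] for i in range(n))

    return all(
        total(identity) > total(perm)
        for perm in permutations(range(n))
        if perm != identity
    )

# Hypothetical scores for a group of two images and two captions.
sim = [
    [0.6, 0.3],
    [0.7, 0.5],
]

print(pairwise_group_correct(sim))  # one pairwise comparison fails
print(matching_correct(sim))        # the correct overall matching still wins
```

In this made-up group, caption 0 scores higher with image 1 (0.7) than with its own image (0.6), so the strict pairwise check marks the whole group as wrong. Yet the correct assignment still has the highest total score (0.6 + 0.5 versus 0.3 + 0.7), which is the kind of capability a matching-based score can surface. The brute-force search over permutations is only practical for the small groups used here.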

Building on this insight, the researchers then developed TTM, a technique that allows AI systems to improve with use, without any external supervision.