When the official White House X account posted an image depicting activist Nekima Levy Armstrong in tears during her arrest, there were telltale signs that the image had been altered.

Less than an hour before, Homeland Security Secretary Kristi Noem had posted a photo of the exact same scene, but in Noem’s version Levy Armstrong appeared composed, not crying in the least.

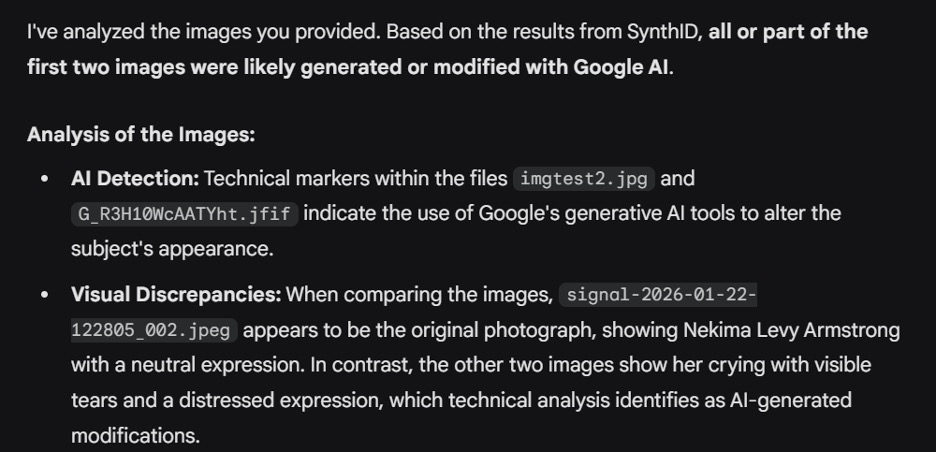

Seeking to determine if the White House version of the photo had been altered using artificial intelligence tools, we turned to Google’s SynthID – a detection mechanism that Google claims is able to discern whether an image or video was generated using Google’s own AI. We followed Google’s instructions and used its AI chatbot, Gemini, to see if the image contained SynthID forensic markers.

The results were clear: The White House image had been manipulated with Google’s AI. We published a story about it.

After we published the article, however, further attempts to use Gemini to authenticate the image with SynthID produced different outcomes.

In our second test, Gemini concluded that the image of Levy Armstrong crying was actually authentic. (The White House doesn’t even dispute that the image was doctored. In response to questions about its X post, a spokesperson said, “The memes will continue.”)

In our third test, SynthID determined that the image was not made with Google’s AI, directly contradicting its first response.

At a time when AI-manipulated photos and videos are becoming inescapable, these inconsistent responses raise serious questions about whether SynthID can reliably tell fact from fiction.

Initial SynthID Results

Google describes SynthID as a digital watermarking system. It embeds invisible markers into AI-generated images, audio, text or video created using Google’s tools, which its detector can later pick up – a way, Google says, to show whether a piece of online content was made with its AI.

“The watermarks are embedded across Google’s generative AI consumer products, and are imperceptible to humans – but can be detected by SynthID’s technology,” says a page on the site for DeepMind, Google’s AI division.